camel-ai/owl

🦉 OWL: Optimized Workforce Learning for General Multi-Agent Assistance in Real-World Task Automation


🚀 Introducing Eigent: The World's First Multi-Agent Workforce Desktop Application 🚀

Eigent empowers you to build, manage, and deploy a custom AI workforce that can turn your most complex workflows into automated tasks.

✨ 100% Open Source • 🔧 Fully Customizable • 🔒 Privacy-First • ⚡ Parallel Execution

Built on CAMEL-AI's acclaimed open-source project, Eigent introduces a Multi-Agent Workforce that boosts productivity through parallel execution, customization, and privacy protection.

Chinese README | Community | Installation | Examples | Paper | Citation | Contributing | CAMEL-AI

🏆 OWL achieves 69.09 average score on GAIA benchmark and ranks 🏅️ #1 among open-source frameworks! 🏆

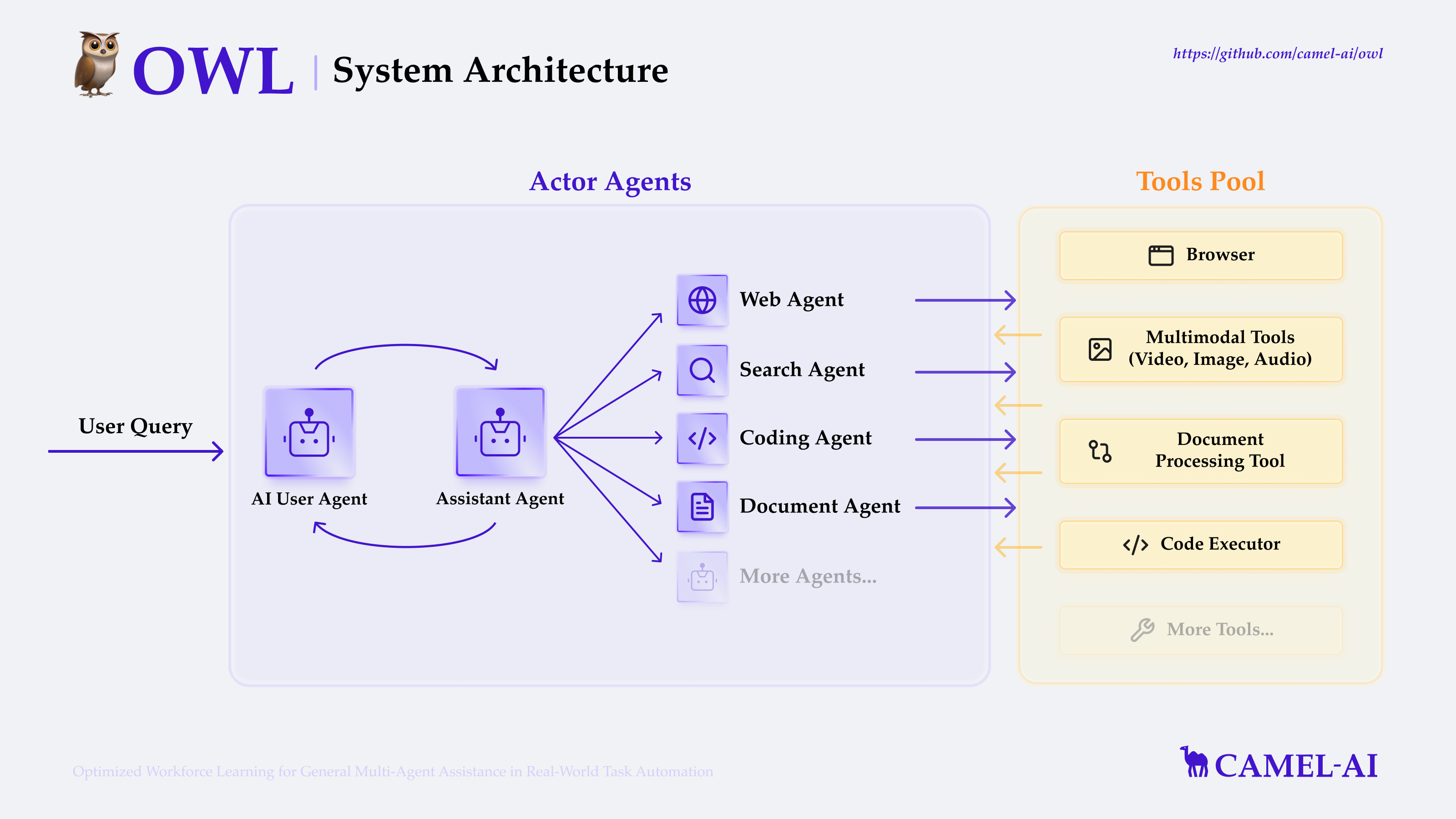

🦉 OWL is a cutting-edge framework for multi-agent collaboration that pushes the boundaries of task automation, built on top of the CAMEL-AI Framework.

Our vision is to revolutionize how AI agents collaborate to solve real-world tasks. By leveraging dynamic agent interactions, OWL enables more natural, efficient, and robust task automation across diverse domains.

📋 Table of Contents

- 📋 Table of Contents

- 🚀 Eigent: Multi-Agent Workforce Desktop Application

- 🔥 News

- 🎬 Demo Video

- ✨️ Core Features

- 🛠️ Installation

- 🚀 Quick Start

- 🧰 Toolkits and Capabilities

- 🌐 Web Interface

- 🧪 Experiments

- ⏱️ Future Plans

- 📄 License

- 🤝 Contributing

- 🔥 Community

- ❓ FAQ

- 📚 Exploring CAMEL Dependency

- 🖊️ Cite

- ⭐ Star History

🚀 Eigent: Multi-Agent Workforce Desktop Application

Eigent is revolutionizing the way we work with AI agents. As the world's first Multi-Agent Workforce desktop application, Eigent transforms complex workflows into automated, intelligent processes.

Why Eigent?

- 🤖 Multi-Agent Collaboration: Deploy multiple specialized AI agents that work together seamlessly

- 🚀 Parallel Execution: Boost productivity with agents that can work on multiple tasks simultaneously

- 🎨 Full Customization: Build and configure your AI workforce to match your specific needs

- 🔒 Privacy-First Design: Your data stays on your machine - no cloud dependencies required

- 💯 100% Open Source: Complete transparency and community-driven development

Key Capabilities

- Build Custom Workflows: Design complex multi-step processes that agents can execute autonomously

- Manage AI Teams: Orchestrate multiple agents with different specializations working in concert

- Deploy Instantly: From idea to execution in minutes, not hours

- Monitor Progress: Real-time visibility into agent activities and task completion

Use Cases

- 📊 Data Analysis: Automate complex data processing and analysis workflows

- 🔍 Research: Deploy agents to gather, synthesize, and report on information

- 💻 Development: Accelerate coding tasks with AI-powered development teams

- 📝 Content Creation: Generate, edit, and optimize content at scale

- 🤝 Business Automation: Transform repetitive business processes into automated workflows

Get Started with Eigent

Eigent is built on top of the OWL framework, leveraging CAMEL-AI's powerful multi-agent capabilities.

🔗 Visit the Eigent Repository to explore the codebase, contribute, or learn more about building your own AI workforce.

Follow our installation guide to start building your own AI workforce today!

🔥 News

🧩 NEW: COMMUNITY AGENT CHALLENGES! 🧩

Showcase your creativity by designing unique challenges for AI agents!

Join our community and see your innovative ideas tackled by cutting-edge AI.

- [2025.09.22]: Excited to announce that OWL has been accepted by NeurIPS 2025! 🚀 Check the latest paper here.

- [2025.07.21]: We open-sourced the training dataset and model checkpoints of the OWL project. Training code is coming soon. Hugging Face link.

- [2025.05.27]: We released the technical report of OWL, including more details on the workforce (framework) and optimized workforce learning (training methodology). paper.

- [2025.05.18]: We open-sourced an initial version for replicating workforce experiment on GAIA here.

- [2025.04.18]: We uploaded OWL's new GAIA benchmark score of 69.09%, ranking #1 among open-source frameworks. Check the technical report here.

- [2025.03.27]: Integrated SearxNGToolkit for performing web searches with the SearxNG search engine.

- [2025.03.26]: Enhanced Browser Toolkit with multi-browser support for "chrome", "msedge", and "chromium" channels.

- [2025.03.25]: Supported Gemini 2.5 Pro, added example run code

- [2025.03.21]: Integrated the OpenRouter model platform and fixed a bug with Gemini tool calling.

- [2025.03.20]: Accept header in MCP Toolkit, support automatic playwright installation.

- [2025.03.16]: Support Bing search, Baidu search.

- [2025.03.12]: Added Bocha search in SearchToolkit, integrated Volcano Engine model platform, and enhanced Azure and OpenAI Compatible models with structured output and tool calling.

- [2025.03.11]: We added MCPToolkit, FileWriteToolkit, and TerminalToolkit to enhance OWL agents with MCP tool calling, file writing capabilities, and terminal command execution.

- [2025.03.09]: We added a web-based user interface that makes it easier to interact with the system.

- [2025.03.07]: We open-sourced the codebase of the 🦉 OWL project.

- [2025.03.03]: OWL achieved the #1 position among open-source frameworks on the GAIA benchmark with a score of 58.18.

🎬 Demo Video

https://github.com/user-attachments/assets/2a2a825d-39ea-45c5-9ba1-f9d58efbc372

This video demonstrates how to install OWL locally and showcases its capabilities as a cutting-edge framework for multi-agent collaboration: https://www.youtube.com/watch?v=8XlqVyAZOr8

✨️ Core Features

- Online Search: Support for multiple search engines (including Wikipedia, Google, DuckDuckGo, Baidu, Bocha, etc.) for real-time information retrieval and knowledge acquisition.

- Multimodal Processing: Support for handling internet or local videos, images, and audio data.

- Browser Automation: Utilize the Playwright framework for simulating browser interactions, including scrolling, clicking, input handling, downloading, navigation, and more.

- Document Parsing: Extract content from Word, Excel, PDF, and PowerPoint files, converting them into text or Markdown format.

- Code Execution: Write and execute Python code using an interpreter.

- Built-in Toolkits: Access to a comprehensive set of built-in toolkits, including:

  - Model Context Protocol (MCP): A universal protocol layer that standardizes AI model interactions with various tools and data sources

  - Core Toolkits: ArxivToolkit, AudioAnalysisToolkit, CodeExecutionToolkit, DalleToolkit, DataCommonsToolkit, ExcelToolkit, GitHubToolkit, GoogleMapsToolkit, GoogleScholarToolkit, ImageAnalysisToolkit, MathToolkit, NetworkXToolkit, NotionToolkit, OpenAPIToolkit, RedditToolkit, SearchToolkit, SemanticScholarToolkit, SymPyToolkit, VideoAnalysisToolkit, WeatherToolkit, BrowserToolkit, and many more for specialized tasks

🛠️ Installation

Prerequisites

Install Python

Before installing OWL, ensure you have Python installed (version 3.10, 3.11, or 3.12 is supported):

Note for GAIA Benchmark Users: When running the GAIA benchmark evaluation, please use the gaia58.18 branch, which includes a customized version of the CAMEL framework in the owl/camel directory. This version contains enhanced toolkits with improved stability, specifically optimized for the GAIA benchmark compared to the standard CAMEL installation.

# Check if Python is installed

python --version

# If not installed, download and install from https://www.python.org/downloads/

# For macOS users with Homebrew:

brew install python@3.10

# For Ubuntu/Debian:

sudo apt update

sudo apt install python3.10 python3.10-venv python3-pip
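The same version check can be done from Python itself, which can be convenient inside a setup script. The following is a minimal sketch; the helper name `is_supported_python` is hypothetical and not part of OWL.

```python
import sys

# Minor versions of Python 3 that this README lists as supported.
SUPPORTED_MINORS = (10, 11, 12)


def is_supported_python(version=None):
    """Return True if `version` (major, minor, ...) is Python 3.10, 3.11, or 3.12."""
    if version is None:
        version = sys.version_info
    major, minor = version[0], version[1]
    return major == 3 and minor in SUPPORTED_MINORS


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    status = "supported" if is_supported_python() else "not supported"
    print(f"Python {current} is {status} by OWL")
```

Passing an explicit tuple such as `(3, 9, 18)` makes the helper easy to check without switching interpreters.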

Installation Options

OWL supports multiple installation methods to fit your workflow preferences.

Option 1: Using uv (Recommended)

# Clone github repo

git clone https://github.com/camel-ai/owl.git

# Change directory into project directory

cd owl

# Install uv if you don't have it already

pip install uv

# Create a virtual environment and install dependencies

uv venv .venv --python=3.10

# Activate the virtual environment

# For macOS/Linux

source .venv/bin/activate

# For Windows

.venv\Scripts\activate

# Install OWL in editable mode, together with CAMEL and all other dependencies

uv pip install -e .

Option 2: Using venv and pip

# Clone github repo

git clone https://github.com/camel-ai/owl.git

# Change directory into project directory

cd owl

# Create a virtual environment

# For Python 3.10 (also works with 3.11, 3.12)

python3.10 -m venv .venv

# Activate the virtual environment

# For macOS/Linux

source .venv/bin/activate

# For Windows

.venv\Scripts\activate

# Install from requirements.txt

pip install -r requirements.txt --use-pep517

Option 3: Using conda

# Clone github repo

git clone https://github.com/camel-ai/owl.git

# Change directory into project directory

cd owl

# Create a conda environment

conda create -n owl python=3.10

# Activate the conda environment

conda activate owl

# Option 1: Install as a package (recommended)

pip install -e .

# Option 2: Install from requirements.txt

pip install -r requirements.txt --use-pep517

Option 4: Using Docker

Using Pre-built Image (Recommended)

# This option downloads a ready-to-use image from Docker Hub

# Fastest and recommended for most users

docker compose up -d

# Run OWL inside the container

docker compose exec owl bash

cd .. && source .venv/bin/activate

playwright install-deps

xvfb-python examples/run.py

Building Image Locally

# For users who need to customize the Docker image or cannot access Docker Hub:

# 1. Open docker-compose.yml

# 2. Comment out the "image: mugglejinx/owl:latest" line

# 3. Uncomment the "build:" section and its nested properties

# 4. Then run:

docker compose up -d --build

# Run OWL inside the container

docker compose exec owl bash

cd .. && source .venv/bin/activate

playwright install-deps

xvfb-python examples/run.py

Using Convenience Scripts

# Navigate to container directory

cd .container

# Make the script executable and build the Docker image

chmod +x build_docker.sh

./build_docker.sh

# Run OWL with your question

./run_in_docker.sh "your question"

Setup Environment Variables

OWL requires various API keys to interact with different services.

Setting Environment Variables Directly

You can set environment variables directly in your terminal:

- macOS/Linux (Bash/Zsh):

export OPENAI_API_KEY="your-openai-api-key-here"

# Add other required API keys as needed

- Windows (Command Prompt):

set OPENAI_API_KEY=your-openai-api-key-here

- Windows (PowerShell):

$env:OPENAI_API_KEY = "your-openai-api-key-here"

Note: Environment variables set directly in the terminal will only persist for the current session.

Alternative: Using a .env File

If you prefer using a .env file instead, you can:

- Copy and Rename the Template:

# For macOS/Linux

cd owl

cp .env_template .env

# For Windows

cd owl

copy .env_template .env

Alternatively, you can manually create a new file named .env in the owl directory and copy the contents from .env_template.

- Configure Your API Keys: Open the .env file in your preferred text editor and insert your API keys in the corresponding fields.

Note: For the minimal example (examples/run_mini.py), you only need to configure the LLM API key (e.g., OPENAI_API_KEY).
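Before launching a script, it can help to confirm that the keys you expect are actually visible to the process. The sketch below is not part of OWL, and the helper name `missing_keys` is hypothetical. It only inspects the process environment, so values kept in a .env file show up here only after something has loaded that file into the environment.

```python
import os


def missing_keys(required, env=None):
    """Return the names in `required` that are unset or blank in `env`."""
    if env is None:
        env = os.environ
    return [name for name in required if not env.get(name, "").strip()]


if __name__ == "__main__":
    # The minimal example only needs an LLM key such as OPENAI_API_KEY.
    missing = missing_keys(["OPENAI_API_KEY"])
    if missing:
        print("Missing environment variables:", ", ".join(missing))
    else:
        print("All required environment variables are set.")
```

Extend the list passed to `missing_keys` with whichever service keys your chosen toolkits need.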

MCP Desktop Commander Setup

If using MCP Desktop Commander within Docker, run:

npx -y @wonderwhy-er/desktop-commander setup --force-file-protocol

For more detailed Docker usage instructions, including cross-platform support, optimized configurations, and troubleshooting, please refer to DOCKER_README.md.

🚀 Quick Start

Basic Usage

After installation and setting up your environment variables, you can start using OWL right away:

python examples/run.py

Running with Different Models

Model Requirements

- Tool Calling: OWL requires models with robust tool calling capabilities to interact with various toolkits. Models must be able to understand tool descriptions, generate appropriate tool calls, and process tool outputs.

- Multimodal Understanding: For tasks involving web interaction, image analysis, or video processing, models with multimodal capabilities are required to interpret visual content and context.

Supported Models

For information on configuring AI models, please refer to our CAMEL models documentation.

Note: For optimal performance, we strongly recommend using OpenAI models (GPT-4 or later versions). Our experiments show that other models may result in significantly lower performance on complex tasks and benchmarks, especially those requiring advanced multi-modal understanding and tool use.

OWL supports various LLM backends, though capabilities may vary depending on the model's tool calling and multimodal abilities. You can use the following scripts to run with different models:

# Run with Claude model

python examples/run_claude.py

# Run with Qwen model

python examples/run_qwen_zh.py

# Run with Deepseek model

python examples/run_deepseek_zh.py

# Run with other OpenAI-compatible models

python examples/run_openai_compatible_model.py

# Run with Gemini model

python examples/run_gemini.py

# Run with Azure OpenAI

python examples/run_azure_openai.py

# Run with Ollama

python examples/run_ollama.py

For a simpler version that only requires an LLM API key, you can try our minimal example:

python examples/run_mini.py

You can run OWL agent with your own task by modifying the examples/run.py script:

# Define your own task

task = "Task description here."

society = construct_society(task)

answer, chat_history, token_count = run_society(society)

print(f"\033[94mAnswer: {answer}\033[0m")

For uploading files, simply provide the file path along with your question:

# Task with a local file (e.g., file path: `tmp/example.docx`)

task = "What is in the given DOCX file? Here is the file path: tmp/example.docx"

society = construct_society(task)

answer, chat_history, token_count = run_society(society)

print(f"\033[94mAnswer: {answer}\033[0m")

OWL will then automatically invoke document-related tools to process the file and extract the answer.
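Because the file path is passed as plain text inside the task, a typo in the path only surfaces once the agents try to open the file. A small guard can catch that earlier. This is a sketch under that assumption; `build_file_task` is a hypothetical helper, not an OWL function.

```python
from pathlib import Path


def build_file_task(question, file_path):
    """Append a file path to a question, failing early if the file is missing."""
    path = Path(file_path)
    if not path.is_file():
        raise FileNotFoundError(f"No such file: {path}")
    # Use forward slashes so the path reads the same on every platform.
    return f"{question} Here is the file path: {path.as_posix()}"
```

The returned string can then be passed to construct_society in place of a hand-written task, for example `build_file_task("What is in the given DOCX file?", "tmp/example.docx")`.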

Example Tasks

Here are some tasks you can try with OWL:

- "Find the latest stock price for Apple Inc."

- "Analyze the sentiment of recent tweets about climate change"

- "Help me debug this Python code: [your code here]"

- "Summarize the main points from this research paper: [paper URL]"

- "Create a data visualization for this dataset: [dataset path]"

🧰 Toolkits and Capabilities

Model Context Protocol (MCP)

OWL's MCP integration provides a standardized way for AI models to interact with various tools and data sources.

Before using MCP, you need to install Node.js.

Install Node.js

Windows

Download the official installer: Node.js.

Check "Add to PATH" option during installation.

Linux

sudo apt update

sudo apt install nodejs npm -y

Mac

brew install node

Install Playwright MCP Service

npm install -g @executeautomation/playwright-mcp-server

npx playwright install-deps

Try our comprehensive MCP examples:

- examples/run_mcp.py: Basic MCP functionality demonstration (local call, requires dependencies)

- examples/run_mcp_sse.py: Example using the SSE protocol (uses remote services, no dependencies)
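MCP clients are commonly pointed at a local server through a small JSON file that names the command to launch. The sketch below generates such a file for the Playwright MCP server installed above. The `mcpServers` layout and the output file name are assumptions based on the convention many MCP clients use, so check examples/run_mcp.py for the configuration the script actually loads.

```python
import json
from pathlib import Path


def playwright_mcp_config():
    """Build a config dict that launches the Playwright MCP server through npx."""
    return {
        "mcpServers": {  # assumed top-level key, following common MCP client configs
            "playwright": {
                "command": "npx",
                "args": ["-y", "@executeautomation/playwright-mcp-server"],
            }
        }
    }


def write_config(path):
    """Write the config as pretty-printed JSON and return the resulting Path."""
    path = Path(path)
    path.write_text(json.dumps(playwright_mcp_config(), indent=2), encoding="utf-8")
    return path


if __name__ == "__main__":
    # Hypothetical file name; use whatever name your script expects.
    print(write_config("mcp_servers_config.json"))
```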

Available Toolkits

Important: Effective use of toolkits requires models with strong tool calling capabilities. For multimodal toolkits (Web, Image, Video), models must also have multimodal understanding abilities.

OWL supports various toolkits that can be customized by modifying the tools list in your script:

# Configure toolkits

tools = [

*BrowserToolkit(headless=False).get_tools(), # Browser automation

*VideoAnalysisToolkit(model=models["video"]).get_tools(),

*AudioAnalysisToolkit().get_tools(), # Requires OpenAI Key

*CodeExecutionToolkit(sandbox="subprocess").get_tools(),

*ImageAnalysisToolkit(model=models["image"]).get_tools(),

SearchToolkit().search_duckduckgo,

SearchToolkit().search_google, # Comment out if unavailable

SearchToolkit().search_wiki,

SearchToolkit().search_bocha,

SearchToolkit().search_baidu,

*ExcelToolkit().get_tools(),

*DocumentProcessingToolkit(model=models["document"]).get_tools(),

*FileWriteToolkit(output_dir="./").get_tools(),

]

Key toolkits include:

Multimodal Toolkits (Require multimodal model capabilities)

- BrowserToolkit: Browser automation for web interaction and navigation

- VideoAnalysisToolkit: Video processing and content analysis

- ImageAnalysisToolkit: Image analysis and interpretation

Text-Based Toolkits

- AudioAnalysisToolkit: Audio processing (requires OpenAI API)

- CodeExecutionToolkit: Python code execution and evaluation

- SearchToolkit: Web searches (Google, DuckDuckGo, Wikipedia)

- DocumentProcessingToolkit: Document parsing (PDF, DOCX, etc.)

Additional specialized toolkits: ArxivToolkit, GitHubToolkit, GoogleMapsToolkit, MathToolkit, NetworkXToolkit, NotionToolkit, RedditToolkit, WeatherToolkit, and more. For a complete list, see the CAMEL toolkits documentation.

Customizing Your Configuration

To customize available tools:

# 1. Import toolkits

from camel.toolkits import BrowserToolkit, SearchToolkit, CodeExecutionToolkit

# 2. Configure tools list

tools = [

*BrowserToolkit(headless=True).get_tools(),

SearchToolkit().search_wiki,

*CodeExecutionToolkit(sandbox="subprocess").get_tools(),

]

# 3. Pass to assistant agent

assistant_agent_kwargs = {"model": models["assistant"], "tools": tools}

Selecting only necessary toolkits optimizes performance and reduces resource usage.
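Instead of commenting tools in and out by hand, the list can be filtered by which credentials are present. The following is a minimal sketch of that idea rather than OWL's own mechanism: `select_tools` is a hypothetical helper, the two search functions are stand-ins for toolkit methods so the example runs without CAMEL installed, and the environment variable names are assumptions.

```python
import os


def select_tools(candidates, env=None):
    """Keep tools whose required environment variables are all set.

    `candidates` is a list of (tool, required_env_var_names) pairs.
    """
    if env is None:
        env = os.environ
    return [
        tool
        for tool, required in candidates
        if all(env.get(name) for name in required)
    ]


# Stand-in callables; in a real script these would be toolkit methods such as
# SearchToolkit().search_wiki and SearchToolkit().search_google.
def search_wiki(query):
    return f"wiki:{query}"


def search_google(query):
    return f"google:{query}"


candidates = [
    (search_wiki, []),  # needs no API key
    (search_google, ["GOOGLE_API_KEY", "SEARCH_ENGINE_ID"]),  # assumed variable names
]

if __name__ == "__main__":
    tools = select_tools(candidates)
    print([tool.__name__ for tool in tools])
```

The resulting list can be passed as the "tools" entry of assistant_agent_kwargs in the same way as a hand-written list.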

🌐 Web Interface

🚀 Enhanced Web Interface Now Available!

Experience improved system stability and optimized performance with our latest update. Start exploring the power of OWL through our user-friendly interface!

Starting the Web UI

# Start the Chinese version

python owl/webapp_zh.py

# Start the English version

python owl/webapp.py

# Start the Japanese version

python owl/webapp_jp.py

Features

- Easy Model Selection: Choose between different models (OpenAI, Qwen, DeepSeek, etc.)

- Environment Variable Management: Configure your API keys and other settings directly from the UI

- Interactive Chat Interface: Communicate with OWL agents through a user-friendly interface

- Task History: View the history and results of your interactions

The web interface is built using Gradio and runs locally on your machine. No data is sent to external servers beyond what's required for the model API calls you configure.

🧪 Experiments

To reproduce OWL's GAIA benchmark score, use our gaia69 branch. It includes a customized version of the CAMEL framework in the owl/camel directory, with enhanced toolkits that are more stable on the GAIA benchmark than the standard CAMEL installation.

When running the benchmark evaluation:

- Switch to the gaia69 branch:

git checkout gaia69

- Run the evaluation script:

python run_gaia_workforce_claude.py

This will execute the same configuration that achieved our top-ranking performance on the GAIA benchmark.

⏱️ Future Plans

We're continuously working to improve OWL. Here's what's on our roadmap:

- Write a technical blog post detailing our exploration and insights in multi-agent collaboration in real-world tasks

- Enhance the toolkit ecosystem with more specialized tools for domain-specific tasks

- Develop more sophisticated agent interaction patterns and communication protocols

- Improve performance on complex multi-step reasoning tasks

📄 License

The source code is licensed under Apache 2.0.

🤝 Contributing

We welcome contributions from the community! Here's how you can help:

- Read our Contribution Guidelines

- Check open issues or create new ones

- Submit pull requests with your improvements

Current Issues Open for Contribution:

To take on an issue, simply leave a comment stating your interest.

🔥 Community

Join us (Discord or WeChat) in pushing the boundaries of finding the scaling laws of agents.

Join us for further discussions!

❓ FAQ

General Questions

Q: Why don't I see Chrome running locally after starting the example script?

A: If OWL determines that a task can be completed using non-browser tools (such as search or code execution), the browser will not be launched. The browser window will only appear when OWL determines that browser-based interaction is necessary.

Q: Which Python version should I use?

A: OWL supports Python 3.10, 3.11, and 3.12.

Q: How can I contribute to the project?

A: See our Contributing section for details on how to get involved. We welcome contributions of all kinds, from code improvements to documentation updates.

Experiment Questions

Q: Which CAMEL version should I use to replicate the role-playing results?

A: We provide a modified version of CAMEL (owl/camel) in the gaia58.18 branch. Please make sure you use this CAMEL version for your experiments.

Q: Why are my experiment results lower than the reported numbers?

A: Since the GAIA benchmark evaluates LLM agents in a realistic world, it introduces a significant amount of randomness. Based on user feedback, one of the most common issues for replication is, for example, agents being blocked on certain webpages due to network reasons. We have uploaded a keywords matching script to help quickly filter out these errors here. You can also check this technical report for more details when evaluating LLM agents in realistic open-world environments.
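The idea behind keyword-based filtering can be sketched as follows. This is not the uploaded script: the keyword list, the function names, and the log format (a mapping from task ID to log text) are all hypothetical and chosen only to illustrate how blocked-page runs might be separated from genuine failures.

```python
# Hypothetical phrases suggesting a page was blocked rather than answered wrongly.
BLOCK_KEYWORDS = ("access denied", "captcha", "403 forbidden", "connection timed out")


def looks_blocked(log_text, keywords=BLOCK_KEYWORDS):
    """Return True if any keyword occurs in the log text, ignoring case."""
    lowered = log_text.lower()
    return any(keyword in lowered for keyword in keywords)


def split_runs(logs):
    """Split {task_id: log_text} into (clean_ids, blocked_ids), each sorted."""
    blocked = sorted(tid for tid, text in logs.items() if looks_blocked(text))
    clean = sorted(tid for tid in logs if tid not in blocked)
    return clean, blocked


if __name__ == "__main__":
    example_logs = {
        "task-1": "Final answer: 42",
        "task-2": "Error: Access Denied while loading page",
    }
    clean, blocked = split_runs(example_logs)
    print("clean:", clean)
    print("blocked (candidates for re-running):", blocked)
```

Runs flagged as blocked are candidates for re-running rather than evidence of a wrong answer.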

📚 Exploring CAMEL Dependency

OWL is built on top of the CAMEL Framework. Here's how you can explore the CAMEL source code and understand how it works with OWL:

Accessing CAMEL Source Code

# Clone the CAMEL repository

git clone https://github.com/camel-ai/camel.git

cd camel

🖊️ Cite

If you find this repo useful, please cite:

@misc{hu2025owl,

title={OWL: Optimized Workforce Learning for General Multi-Agent Assistance in Real-World Task Automation},

author={Mengkang Hu and Yuhang Zhou and Wendong Fan and Yuzhou Nie and Bowei Xia and Tao Sun and Ziyu Ye and Zhaoxuan Jin and Yingru Li and Qiguang Chen and Zeyu Zhang and Yifeng Wang and Qianshuo Ye and Bernard Ghanem and Ping Luo and Guohao Li},

year={2025},

eprint={2505.23885},

archivePrefix={arXiv},

primaryClass={cs.AI},

url={https://arxiv.org/abs/2505.23885},

}

⭐ Star History
