OpenBMB/MiniCPM-o

A Gemini 2.5 Flash Level MLLM for Vision, Speech, and Full-Duplex Multimodal Live Streaming on Your Phone

Health Score

75

Weekly Growth

+0

+0.0% this week

Contributors

1

Total contributors

Open Issues

84

Generated Insights

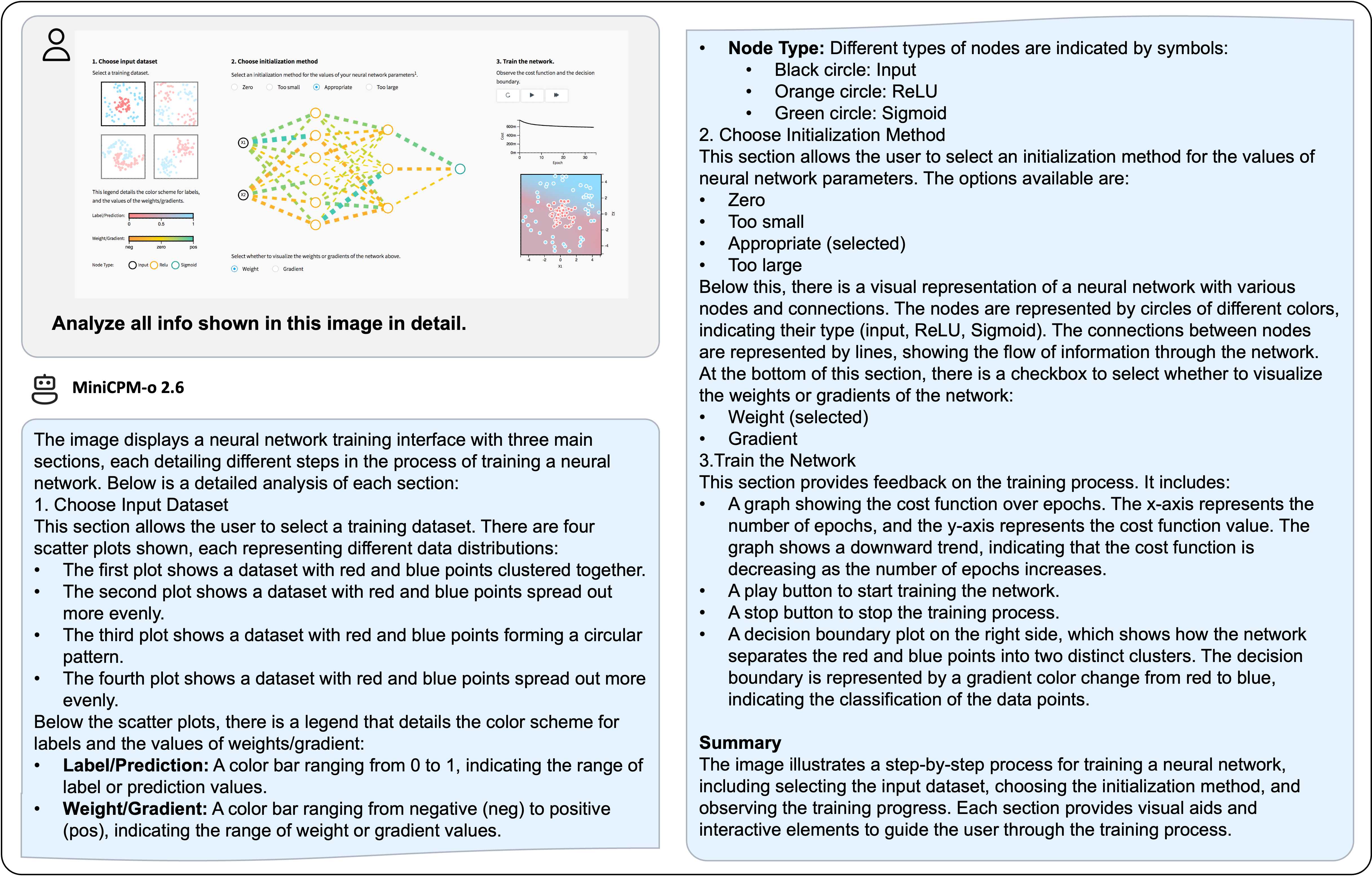

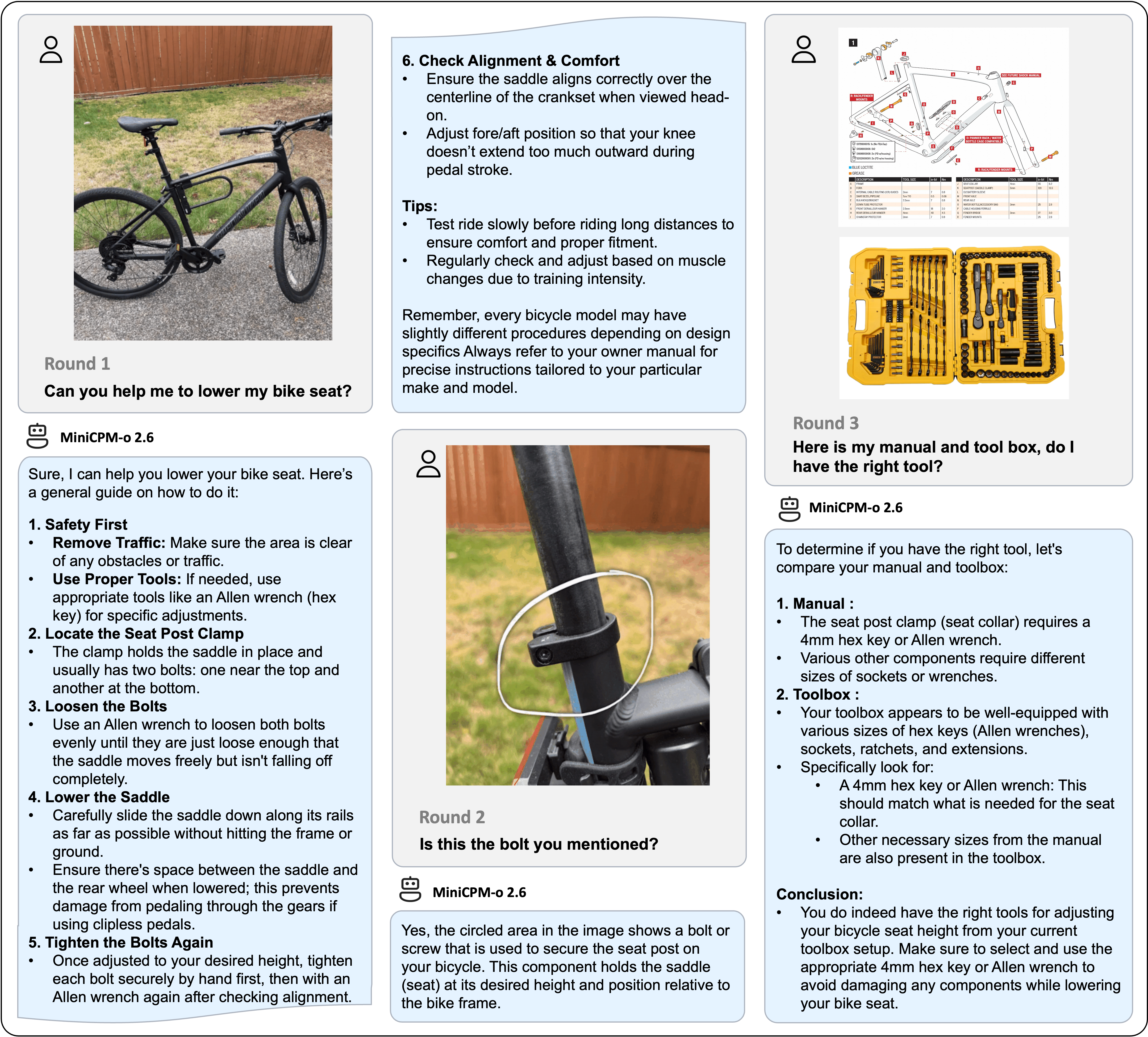

About MiniCPM-o

A GPT-4o Level MLLM for Single Image, Multi Image and Video Understanding on Your Phone

中文 | English

WeChat | Discord

MiniCPM-V 4.5 🤗 🤖 | MiniCPM-o 2.6 🤗 🤖 | 🍳 Cookbook | 📄 Technical Report (Coming Soon)

MiniCPM-V is a series of efficient end-side multimodal LLMs (MLLMs), which accept images, videos and text as inputs and deliver high-quality text outputs. MiniCPM-o additionally takes audio as input and provides high-quality speech output in an end-to-end fashion. Since February 2024, we have released 7 versions of the model, aiming to achieve strong performance and efficient deployment. The most notable models in the series currently include:

- MiniCPM-V 4.5: 🔥🔥🔥 The latest and most capable model in the MiniCPM-V series. With a total of 8B parameters, this model outperforms GPT-4o-latest, Gemini-2.0 Pro, and Qwen2.5-VL 72B in vision-language capabilities, making it the most performant on-device multimodal model in the open-source community. This version brings new features including efficient high refresh rate and long video understanding (up to 96x compression rate for video tokens), controllable hybrid fast/deep thinking, strong handwritten OCR and complex table/document parsing. It also advances MiniCPM-V's popular features such as trustworthy behavior, multilingual support and end-side deployability.

- MiniCPM-o 2.6: ⭐️⭐️⭐️ The most capable model in the MiniCPM-o series. With a total of 8B parameters, this end-to-end model achieves comparable performance to GPT-4o-202405 in vision, speech, and multimodal live streaming, making it one of the most versatile and performant models in the open-source community. For the new voice mode, MiniCPM-o 2.6 supports bilingual real-time speech conversation with configurable voices, and also allows for fun capabilities such as emotion/speed/style control, end-to-end voice cloning, role play, etc. Due to its superior token density, MiniCPM-o 2.6 can for the first time support multimodal live streaming on end-side devices such as iPad.

News

📌 Pinned

- [2025.08.26] 🔥🔥🔥 We open-source MiniCPM-V 4.5, which outperforms GPT-4o-latest, Gemini-2.0 Pro, and Qwen2.5-VL 72B. It advances popular capabilities of MiniCPM-V, and brings useful new features. Try it now!

- [2025.08.01] ⭐️⭐️⭐️ We open-sourced the MiniCPM-V & o Cookbook! It provides comprehensive guides for diverse user scenarios, paired with our new Docs Site for smoother onboarding.

- [2025.06.20] ⭐️⭐️⭐️ Our official Ollama repository is released. Try our latest models with one click!

- [2025.03.01] 🚀🚀🚀 RLAIF-V, the alignment technique of MiniCPM-o, is accepted by CVPR 2025 Highlights! The code, dataset, and paper are open-sourced!

- [2025.01.24] 📢📢📢 MiniCPM-o 2.6 technical report is released! See here.

- [2025.01.19] 📢 ATTENTION! We are currently working on merging MiniCPM-o 2.6 into the official repositories of llama.cpp, Ollama, and vllm. Until the merge is complete, please USE OUR LOCAL FORKS of llama.cpp, Ollama, and vllm. Using the official repositories before the merge may lead to unexpected issues.

- [2025.01.19] ⭐️⭐️⭐️ MiniCPM-o tops GitHub Trending and reaches top-2 on Hugging Face Trending!

- [2025.01.17] We have updated the usage of the MiniCPM-o 2.6 int4 quantized version and resolved the model initialization error. Click here and try it now!

- [2025.01.13] 🔥🔥🔥 We open-source MiniCPM-o 2.6, which matches GPT-4o-202405 on vision, speech and multimodal live streaming. It advances popular capabilities of MiniCPM-V 2.6, and supports various new fun features. Try it now!

- [2024.08.17] 🚀🚀🚀 MiniCPM-V 2.6 is now fully supported by official llama.cpp! GGUF models of various sizes are available here.

- [2024.08.06] 🔥🔥🔥 We open-source MiniCPM-V 2.6, which outperforms GPT-4V on single image, multi-image and video understanding. It advances popular features of MiniCPM-Llama3-V 2.5, and can support real-time video understanding on iPad. Try it now!

- [2024.08.03] MiniCPM-Llama3-V 2.5 technical report is released! See here.

- [2024.05.23] 🔥🔥🔥 MiniCPM-V tops GitHub Trending and Hugging Face Trending! Our demo, recommended by Hugging Face Gradio’s official account, is available here. Come and try it out!

Click to view more news.

- [2025.08.02] 🚀🚀🚀 We open-source MiniCPM-V 4.0, which outperforms GPT-4.1-mini-20250414 in image understanding. It advances popular features of MiniCPM-V 2.6, and largely improves the efficiency. We also open-source the iOS App on iPhone and iPad. Try it now!

- [2025.01.23] 💡💡💡 MiniCPM-o 2.6 is now supported by Align-Anything, a framework by PKU-Alignment Team for aligning any-to-any modality large models with human intentions. It supports DPO and SFT fine-tuning on both vision and audio. Try it now!

- [2024.08.15] We now also support multi-image SFT. For more details, please refer to the document.

- [2024.08.14] MiniCPM-V 2.6 now also supports fine-tuning with the SWIFT framework!

- [2024.08.10] 🚀🚀🚀 MiniCPM-Llama3-V 2.5 is now fully supported by official llama.cpp! GGUF models of various sizes are available here.

- [2024.07.19] MiniCPM-Llama3-V 2.5 supports vLLM now! See here.

- [2024.06.03] Now, you can run MiniCPM-Llama3-V 2.5 on multiple low VRAM GPUs (12 GB or 16 GB) by distributing the model's layers across multiple GPUs. For more details, check this link.

- [2024.05.28] 🚀🚀🚀 MiniCPM-Llama3-V 2.5 features are now fully supported in llama.cpp and Ollama! Please pull the latest code of our provided forks (llama.cpp, Ollama). GGUF models in various sizes are available here. The MiniCPM-Llama3-V 2.5 series is not supported by the official repositories yet, and we are working hard to merge PRs. Please stay tuned!

- [2024.05.28] 💫 We now support LoRA fine-tuning for MiniCPM-Llama3-V 2.5, using only 2 V100 GPUs! See more statistics here.

- [2024.05.25] MiniCPM-Llama3-V 2.5 now supports streaming outputs and customized system prompts. Try it here!

- [2024.05.24] We release the MiniCPM-Llama3-V 2.5 gguf, which supports llama.cpp inference and provides smooth decoding at 6 to 8 tokens/s on mobile phones. Try it now!

- [2024.05.23] 🔍 We've released a comprehensive comparison between Phi-3-vision-128k-instruct and MiniCPM-Llama3-V 2.5, including benchmark evaluations, multilingual capabilities, and inference efficiency 🌟📊🌍🚀. Click here to view more details.

- [2024.05.20] We open-source MiniCPM-Llama3-V 2.5. It has improved OCR capability and supports 30+ languages, representing the first end-side MLLM achieving GPT-4V level performance! We provide efficient inference and simple fine-tuning. Try it now!

- [2024.04.23] MiniCPM-V-2.0 supports vLLM now! Click here to view more details.

- [2024.04.18] We created a Hugging Face Space to host the demo of MiniCPM-V 2.0 here!

- [2024.04.17] MiniCPM-V-2.0 supports deploying WebUI Demo now!

- [2024.04.15] MiniCPM-V-2.0 now also supports fine-tuning with the SWIFT framework!

- [2024.04.12] We open-source MiniCPM-V 2.0, which achieves comparable performance with Gemini Pro in understanding scene text and outperforms strong Qwen-VL-Chat 9.6B and Yi-VL 34B on OpenCompass, a comprehensive evaluation over 11 popular benchmarks. Click here to view the MiniCPM-V 2.0 technical blog.

- [2024.03.14] MiniCPM-V now supports fine-tuning with the SWIFT framework. Thanks to Jintao for the contribution!

- [2024.03.01] MiniCPM-V can now be deployed on Mac!

- [2024.02.01] We open-source MiniCPM-V and OmniLMM-12B, which support efficient end-side deployment and powerful multimodal capabilities, respectively.

Contents

- MiniCPM-V 4.5

- MiniCPM-o 2.6

- MiniCPM-V & o Cookbook

- Chat with Our Demo on Gradio 🤗

- Inference

- Fine-tuning

- Awesome work using MiniCPM-V & MiniCPM-o

- FAQs

- Limitations

MiniCPM-V 4.5

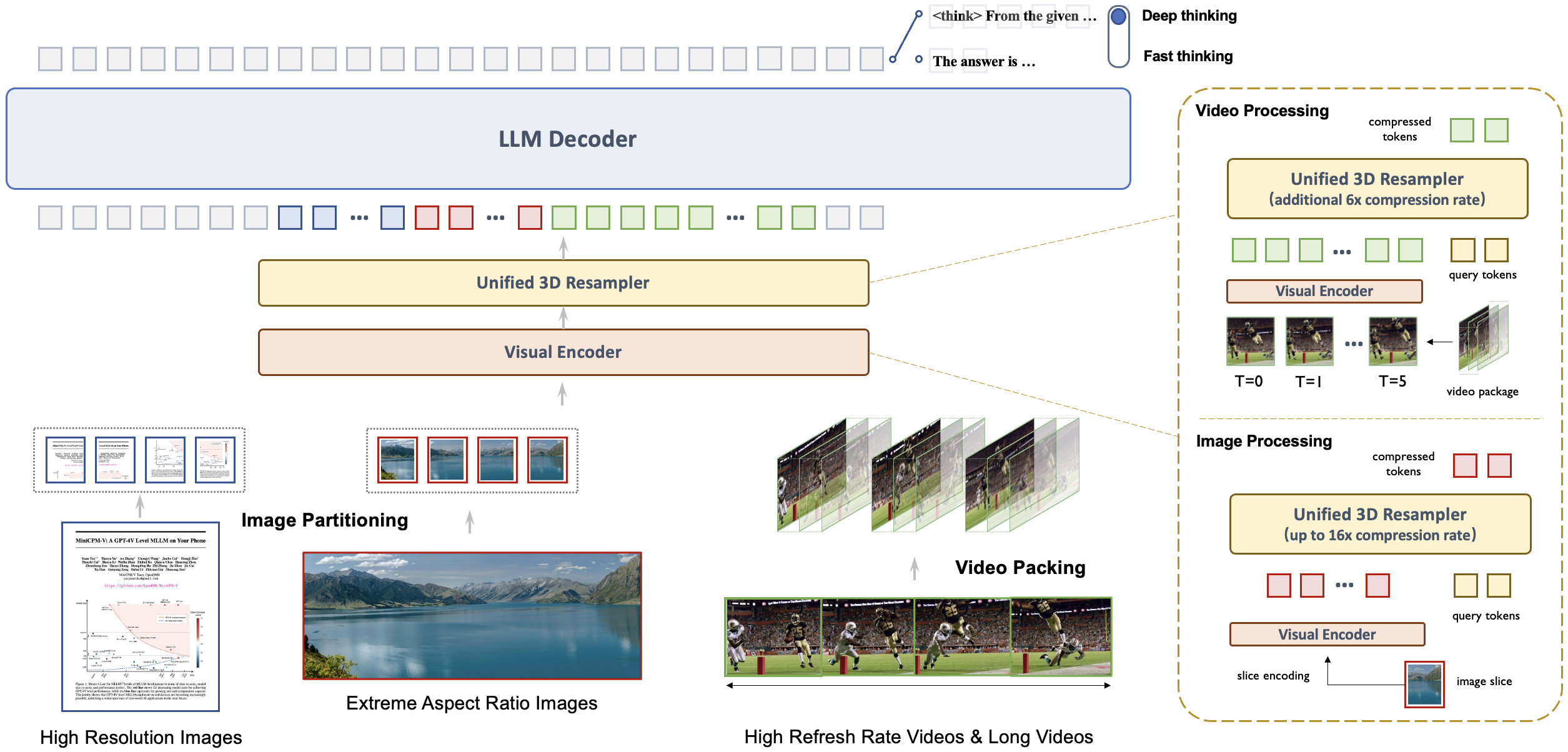

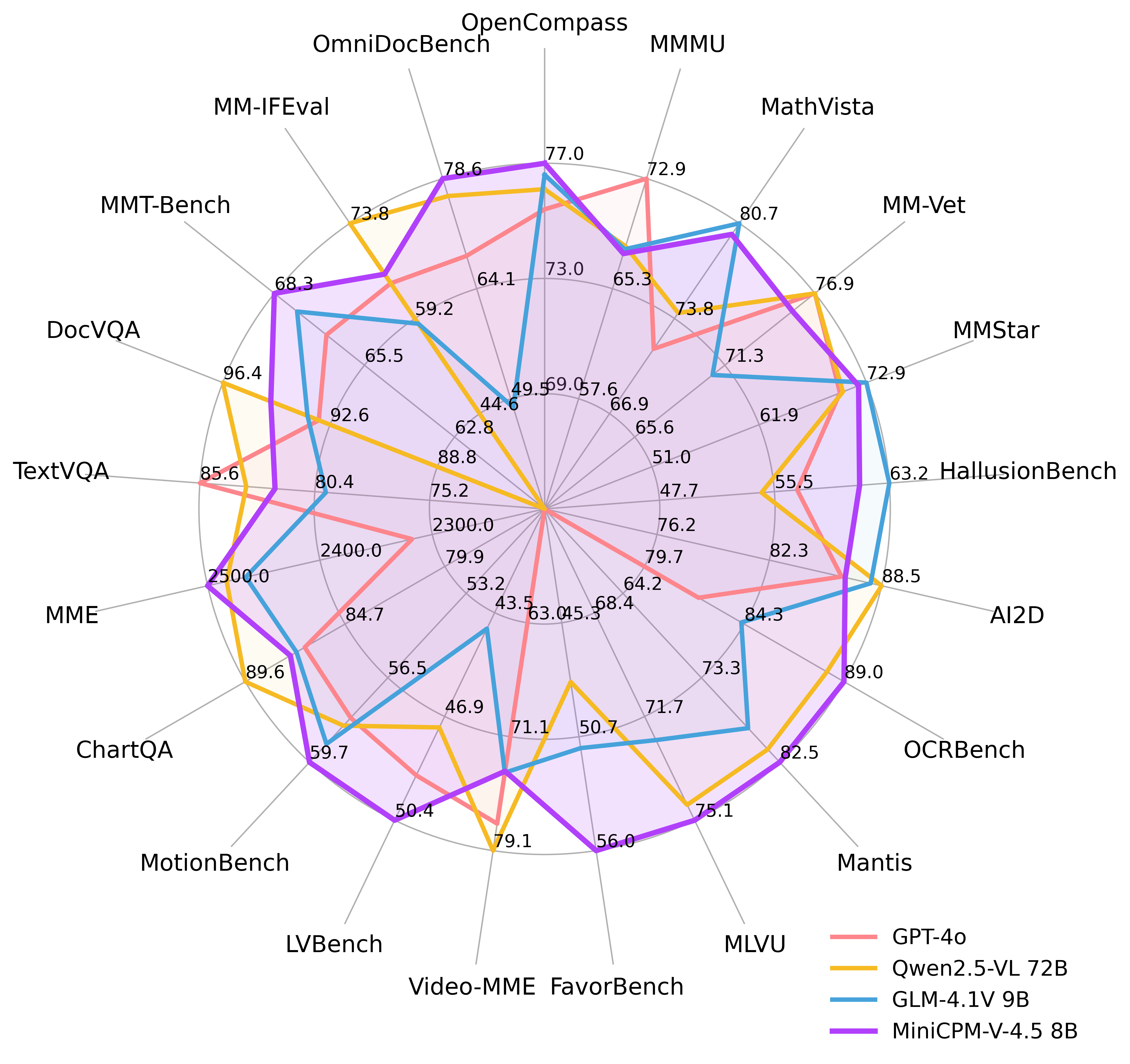

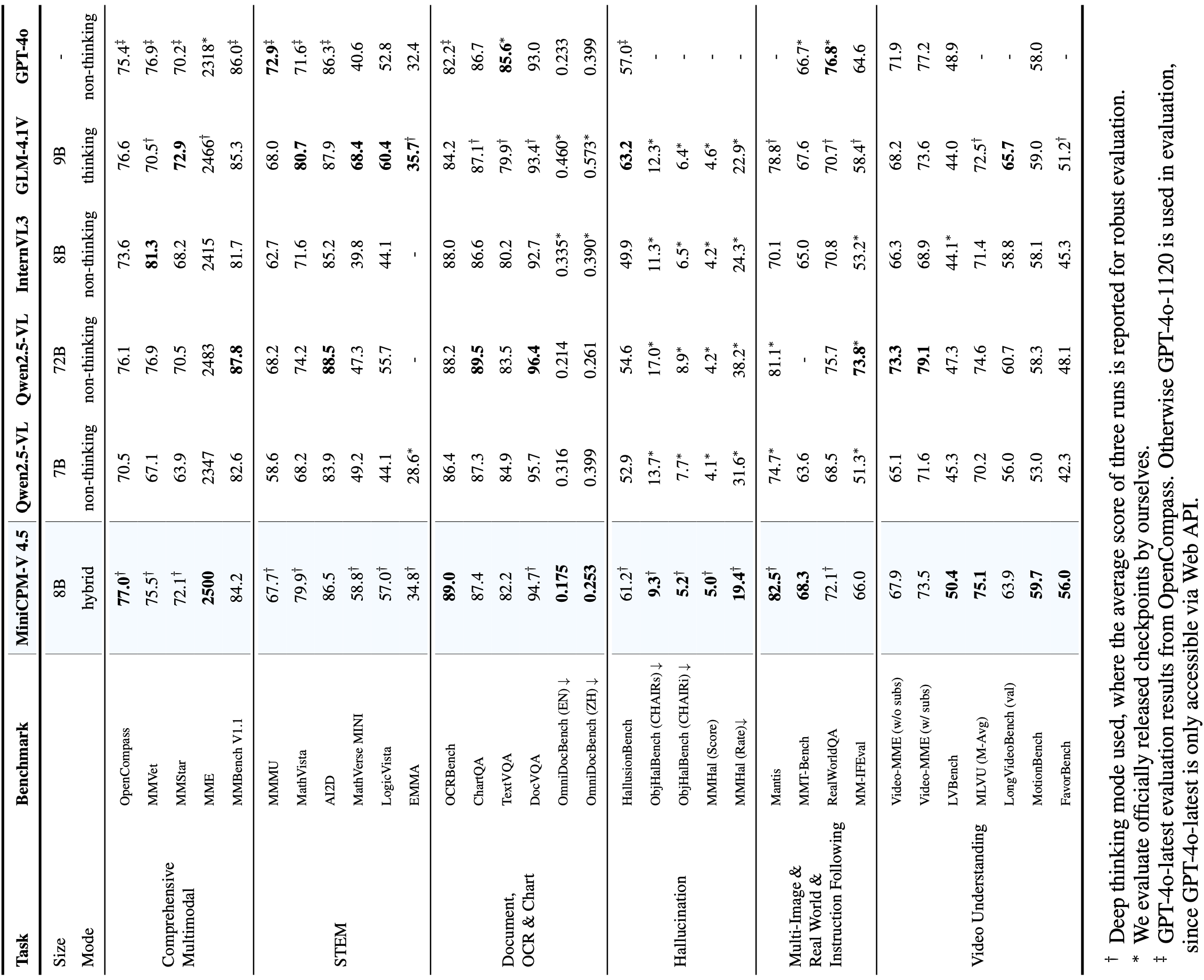

MiniCPM-V 4.5 is the latest and most capable model in the MiniCPM-V series. The model is built on Qwen3-8B and SigLIP2-400M with a total of 8B parameters. It exhibits a significant performance improvement over previous MiniCPM-V and MiniCPM-o models, and introduces new useful features. Notable features of MiniCPM-V 4.5 include:

-

🔥 State-of-the-art Vision-Language Capability. MiniCPM-V 4.5 achieves an average score of 77.0 on OpenCompass, a comprehensive evaluation of 8 popular benchmarks. With only 8B parameters, it surpasses widely used proprietary models like GPT-4o-latest, Gemini-2.0 Pro, and strong open-source models like Qwen2.5-VL 72B for vision-language capabilities, making it the most performant MLLM under 30B parameters.

-

🎬 Efficient High Refresh Rate and Long Video Understanding. Powered by a new unified 3D-Resampler over images and videos, MiniCPM-V 4.5 can now achieve 96x compression rate for video tokens, where 6 448x448 video frames can be jointly compressed into 64 video tokens (normally 1,536 tokens for most MLLMs). This means that the model can percieve significantly more video frames without increasing the LLM inference cost. This brings state-of-the-art high refresh rate (up to 10FPS) video understanding and long video understanding capabilities on Video-MME, LVBench, MLVU, MotionBench, FavorBench, etc., efficiently.

-

⚙️ Controllable Hybrid Fast/Deep Thinking. MiniCPM-V 4.5 supports both fast thinking for efficient frequent usage with competitive performance, and deep thinking for more complex problem solving. To cover efficiency and performance trade-offs in different user scenarios, this fast/deep thinking mode can be switched in a highly controlled fashion.

-

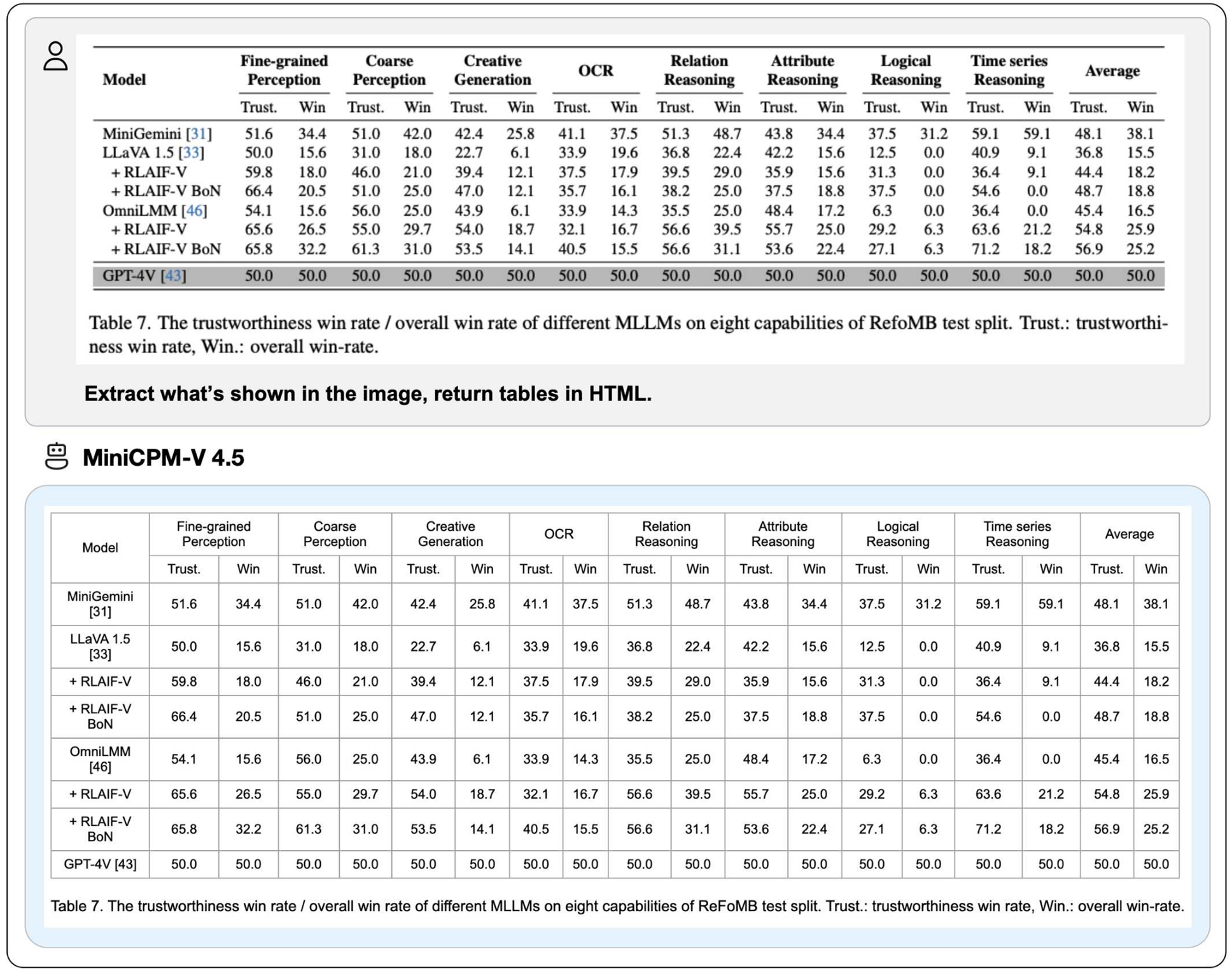

💪 Strong OCR, Document Parsing and Others. Based on LLaVA-UHD architecture, MiniCPM-V 4.5 can process high-resolution images with any aspect ratio and up to 1.8 million pixels (e.g., 1344x1344), using 4x less visual tokens than most MLLMs. The model achieves leading performance on OCRBench, surpassing proprietary models such as GPT-4o-latest and Gemini 2.5. It also achieves state-of-the-art performance for PDF document parsing capability on OmniDocBench among general MLLMs. Based on the the latest RLAIF-V and VisCPM techniques, it features trustworthy behaviors, outperforming GPT-4o-latest on MMHal-Bench, and supports multilingual capabilities in more than 30 languages.

-

💫 Easy Usage. MiniCPM-V 4.5 can be easily used in various ways: (1) llama.cpp and ollama support for efficient CPU inference on local devices, (2) int4, GGUF and AWQ format quantized models in 16 sizes, (3) SGLang and vLLM support for high-throughput and memory-efficient inference, (4) fine-tuning on new domains and tasks with Transformers and LLaMA-Factory, (5) quick local WebUI demo, (6) optimized local iOS app on iPhone and iPad, and (7) online web demo on server. See our Cookbook for full usages!

Key Techniques

- Architecture: Unified 3D-Resampler for High-density Video Compression. MiniCPM-V 4.5 introduces a 3D-Resampler that overcomes the performance-efficiency trade-off in video understanding. By grouping and jointly compressing up to 6 consecutive video frames into just 64 tokens (the same token count used for a single image in the MiniCPM-V series), MiniCPM-V 4.5 achieves a 96× compression rate for video tokens. This allows the model to process more video frames without additional LLM computational cost, enabling high refresh rate video and long video understanding. The architecture supports unified encoding for images, multi-image inputs, and videos, ensuring seamless capability and knowledge transfer.

- Pre-training: Unified Learning for OCR and Knowledge from Documents. Existing MLLMs learn OCR capability and knowledge from documents in isolated training approaches. We observe that the essential difference between these two training approaches is the visibility of the text in images. By dynamically corrupting text regions in documents with varying noise levels and asking the model to reconstruct the text, the model learns to adaptively and properly switch between accurate text recognition (when text is visible) and multimodal context-based knowledge reasoning (when text is heavily obscured). This eliminates reliance on error-prone document parsers in knowledge learning from documents, and prevents hallucinations from over-augmented OCR data, resulting in top-tier OCR and multimodal knowledge performance with minimal engineering overhead.

- Post-training: Hybrid Fast/Deep Thinking with Multimodal RL. MiniCPM-V 4.5 offers a balanced reasoning experience through two switchable modes: fast thinking for efficient daily use and deep thinking for complex tasks. Using a new hybrid reinforcement learning method, the model jointly optimizes both modes, significantly enhancing fast-mode performance without compromising deep-mode capability. Combined with RLPR and RLAIF-V, it generalizes robust reasoning skills from broad multimodal data while effectively reducing hallucinations.
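The 96x figure quoted for the 3D-Resampler can be reproduced with a short calculation. The sketch below is one reading of the numbers, not the authors' derivation: it assumes a 14-pixel vision-encoder patch size (not stated in this section) and measures compression against the raw patch count. The function name `video_token_stats` is hypothetical.

```python
def video_token_stats(frames=6, side=448, patch=14,
                      packed_tokens=64, baseline_tokens=1536):
    """Return (total patches, patch-to-token ratio, reduction vs. baseline).

    patch=14 is an assumption; the other defaults come from the text above.
    """
    patches_per_frame = (side // patch) ** 2      # 32 * 32 = 1024 patches
    total_patches = frames * patches_per_frame    # 6 * 1024 = 6144 patches
    compression = total_patches / packed_tokens   # 6144 / 64 = 96.0
    reduction = baseline_tokens / packed_tokens   # 1536 / 64 = 24.0
    return total_patches, compression, reduction


print(video_token_stats())  # (6144, 96.0, 24.0)
```

Under these assumptions, the same six frames cost 24 times fewer LLM tokens than the 1,536-token baseline quoted for most MLLMs, while the 96x figure corresponds to patches per output token.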

Evaluation

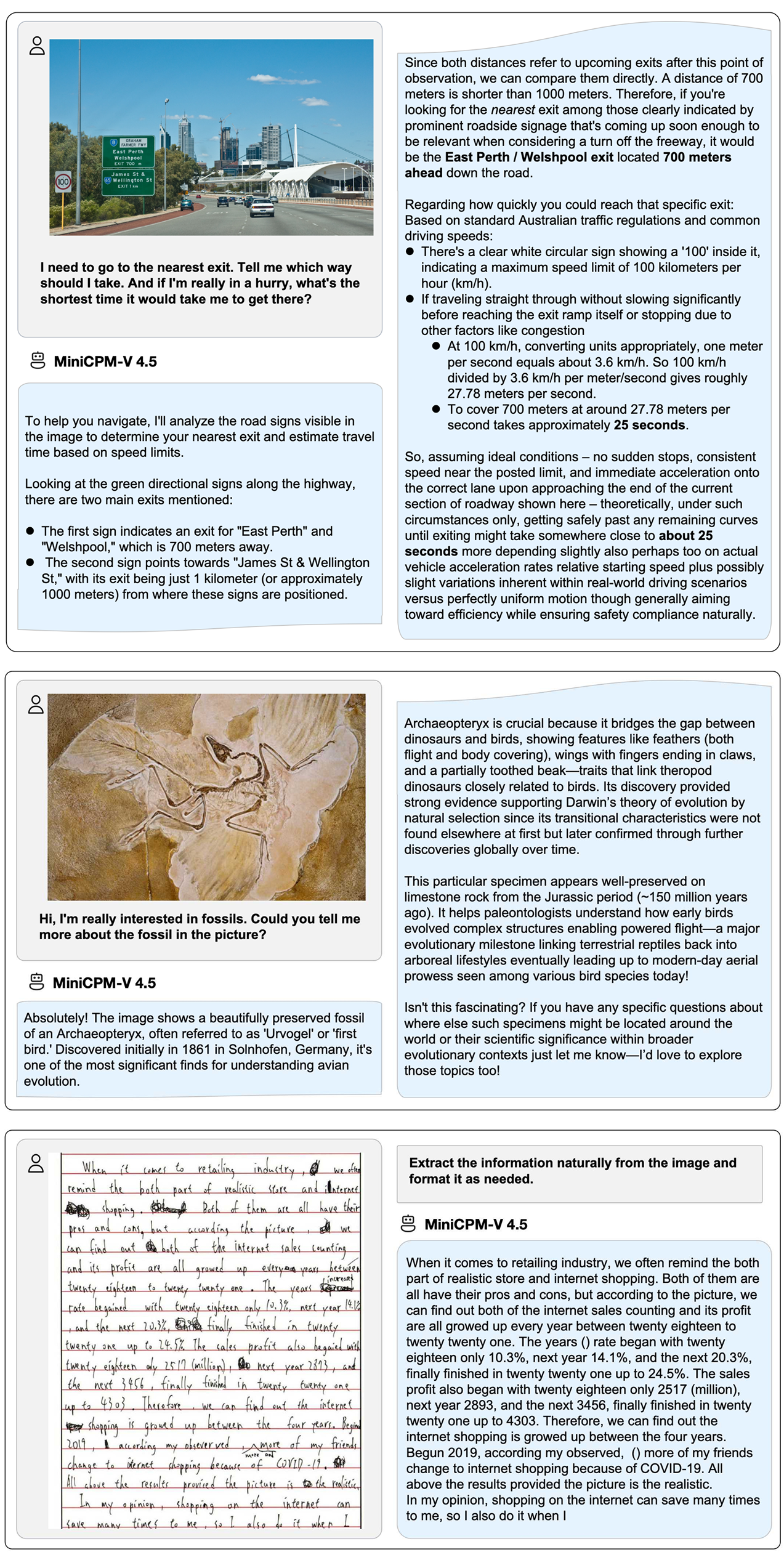

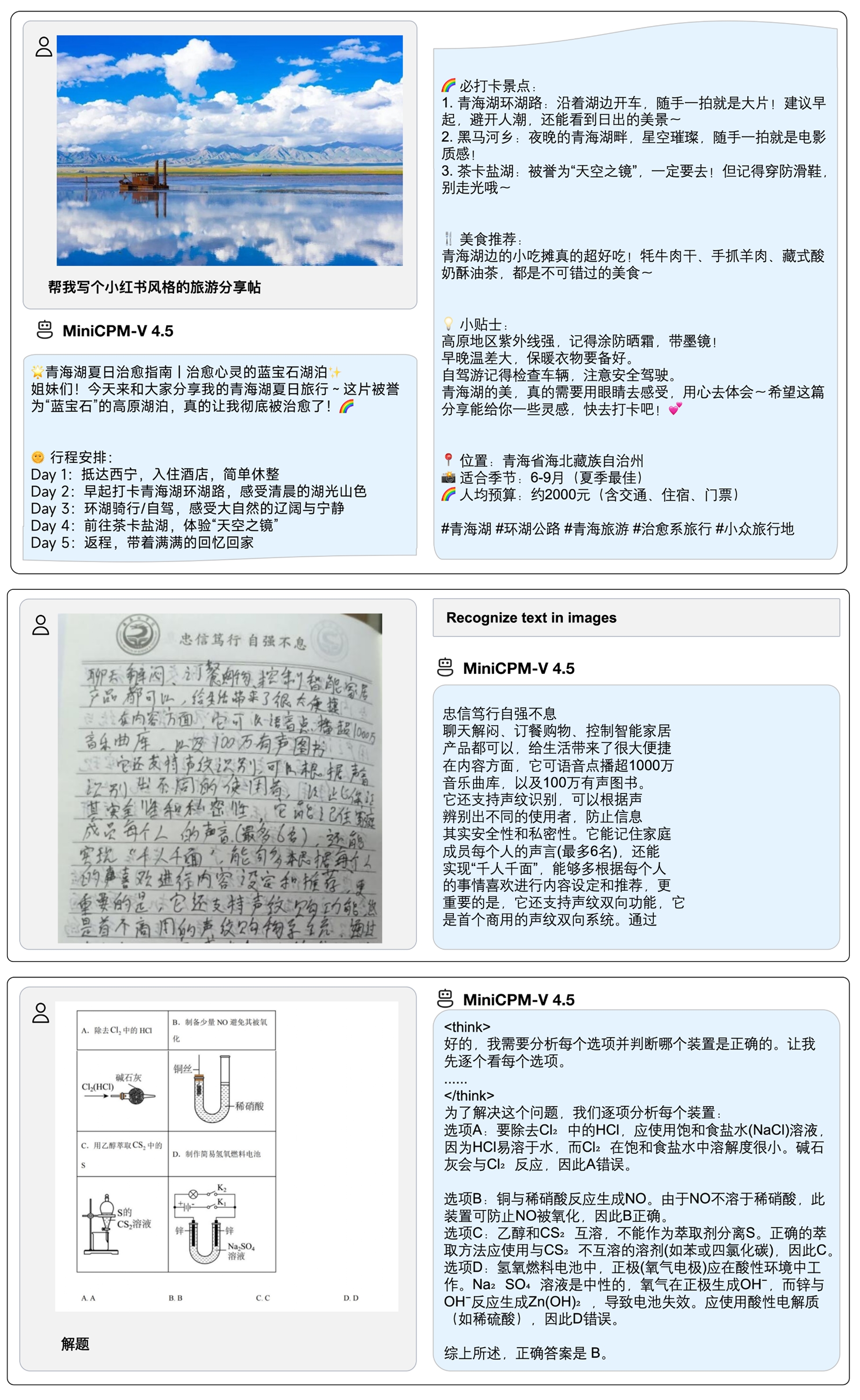

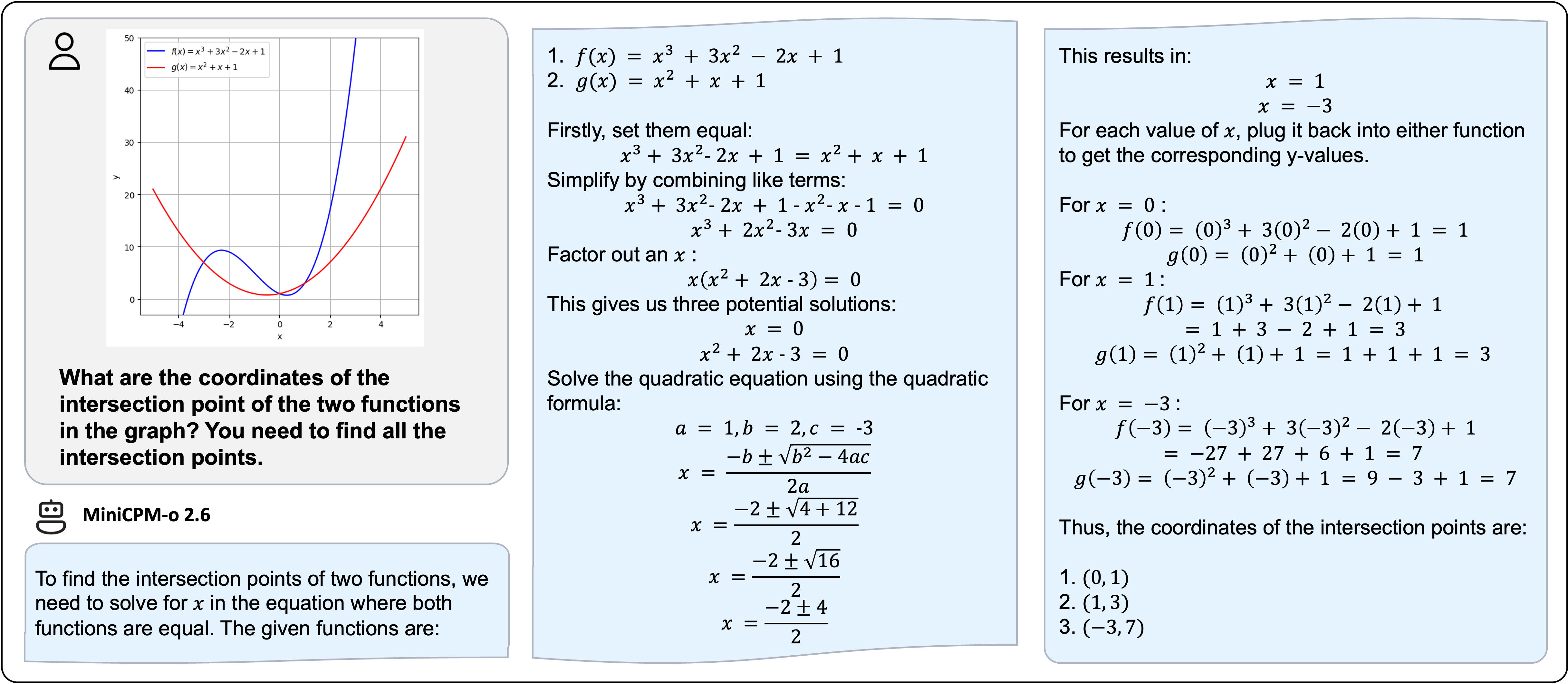

Examples

Click to view more cases.

We deploy MiniCPM-V 4.5 on an iPad M4 with the iOS demo. The demo video is a raw, unedited screen recording.

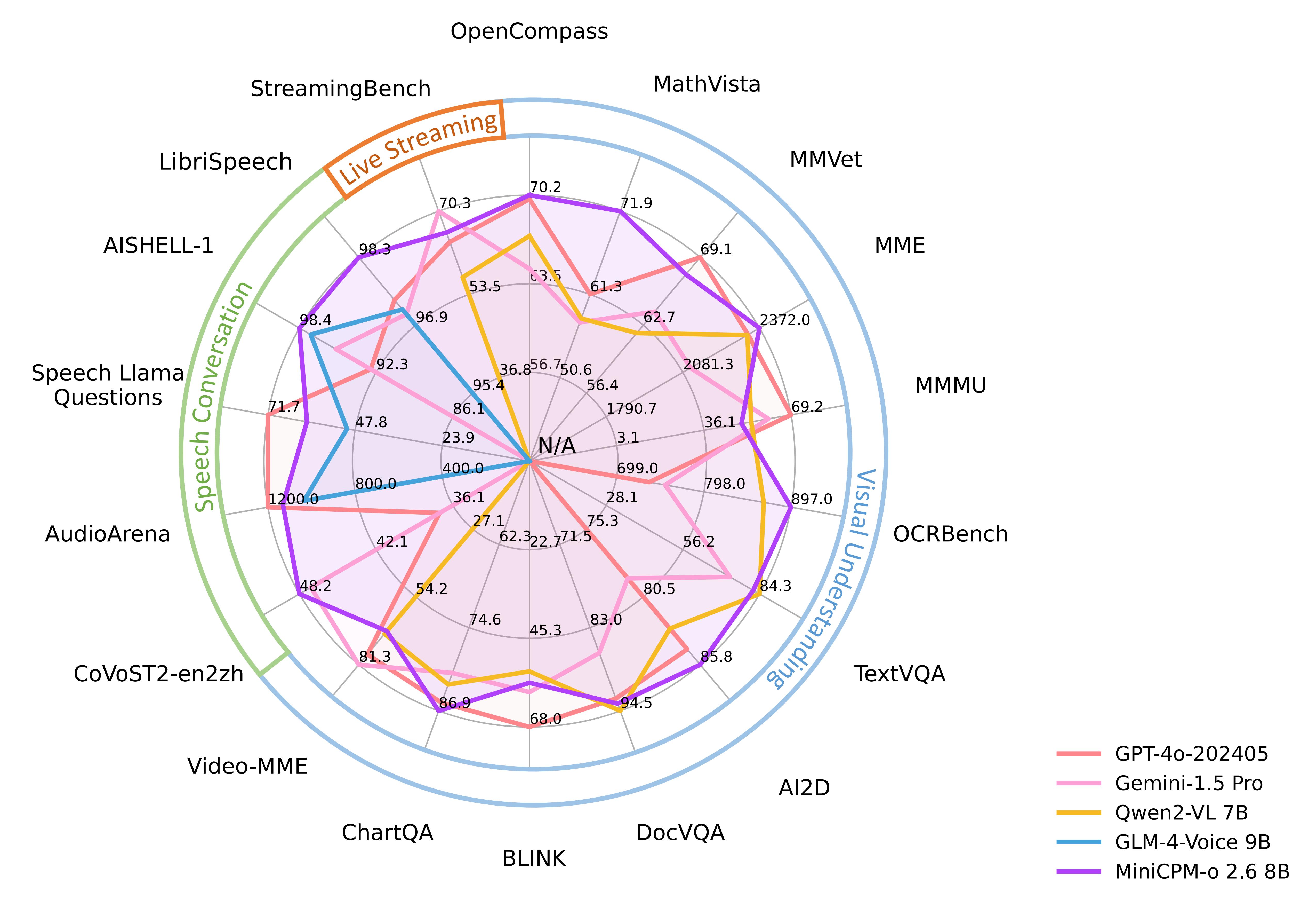

MiniCPM-o 2.6

MiniCPM-o 2.6 is the latest and most capable model in the MiniCPM-o series. The model is built in an end-to-end fashion based on SigLip-400M, Whisper-medium-300M, ChatTTS-200M, and Qwen2.5-7B with a total of 8B parameters. It exhibits a significant performance improvement over MiniCPM-V 2.6, and introduces new features for real-time speech conversation and multimodal live streaming. Notable features of MiniCPM-o 2.6 include:

- 🔥 Leading Visual Capability. MiniCPM-o 2.6 achieves an average score of 70.2 on OpenCompass, a comprehensive evaluation of 8 popular benchmarks. With only 8B parameters, it surpasses widely used proprietary models like GPT-4o-202405, Gemini 1.5 Pro, and Claude 3.5 Sonnet for single image understanding. It also outperforms GPT-4V and Claude 3.5 Sonnet in multi-image and video understanding, and shows promising in-context learning capability.

- 🎙 State-of-the-art Speech Capability. MiniCPM-o 2.6 supports bilingual real-time speech conversation with configurable voices in English and Chinese. It outperforms GPT-4o-realtime on audio understanding tasks such as ASR and STT translation, and shows state-of-the-art performance on speech conversation in both semantic and acoustic evaluations in the open-source community. It also allows for fun features such as emotion/speed/style control, end-to-end voice cloning, role play, etc.

- 🎬 Strong Multimodal Live Streaming Capability. As a new feature, MiniCPM-o 2.6 can accept continuous video and audio streams independent of user queries, and support real-time speech interaction. It outperforms GPT-4o-202408 and Claude 3.5 Sonnet and shows state-of-the-art performance in the open-source community on StreamingBench, a comprehensive benchmark for real-time video understanding, omni-source (video & audio) understanding, and multimodal contextual understanding.

- 💪 Strong OCR Capability and Others. Advancing popular visual capabilities from the MiniCPM-V series, MiniCPM-o 2.6 can process images with any aspect ratio and up to 1.8 million pixels (e.g., 1344x1344). It achieves state-of-the-art performance on OCRBench for models under 25B, surpassing proprietary models such as GPT-4o-202405. Based on the latest RLAIF-V and VisCPM techniques, it features trustworthy behaviors, outperforming GPT-4o and Claude 3.5 Sonnet on MMHal-Bench, and supports multilingual capabilities in more than 30 languages.

- 🚀 Superior Efficiency. In addition to its friendly size, MiniCPM-o 2.6 also shows state-of-the-art token density (i.e., the number of pixels encoded into each visual token). It produces only 640 tokens when processing a 1.8M pixel image, which is 75% fewer than most models. This directly improves the inference speed, first-token latency, memory usage, and power consumption. As a result, MiniCPM-o 2.6 can efficiently support multimodal live streaming on end-side devices such as iPads.

- 💫 Easy Usage. MiniCPM-o 2.6 can be easily used in various ways: (1) llama.cpp support for efficient CPU inference on local devices, (2) int4 and GGUF format quantized models in 16 sizes, (3) vLLM support for high-throughput and memory-efficient inference, (4) fine-tuning on new domains and tasks with LLaMA-Factory, (5) quick local WebUI demo, and (6) online web demo on server.

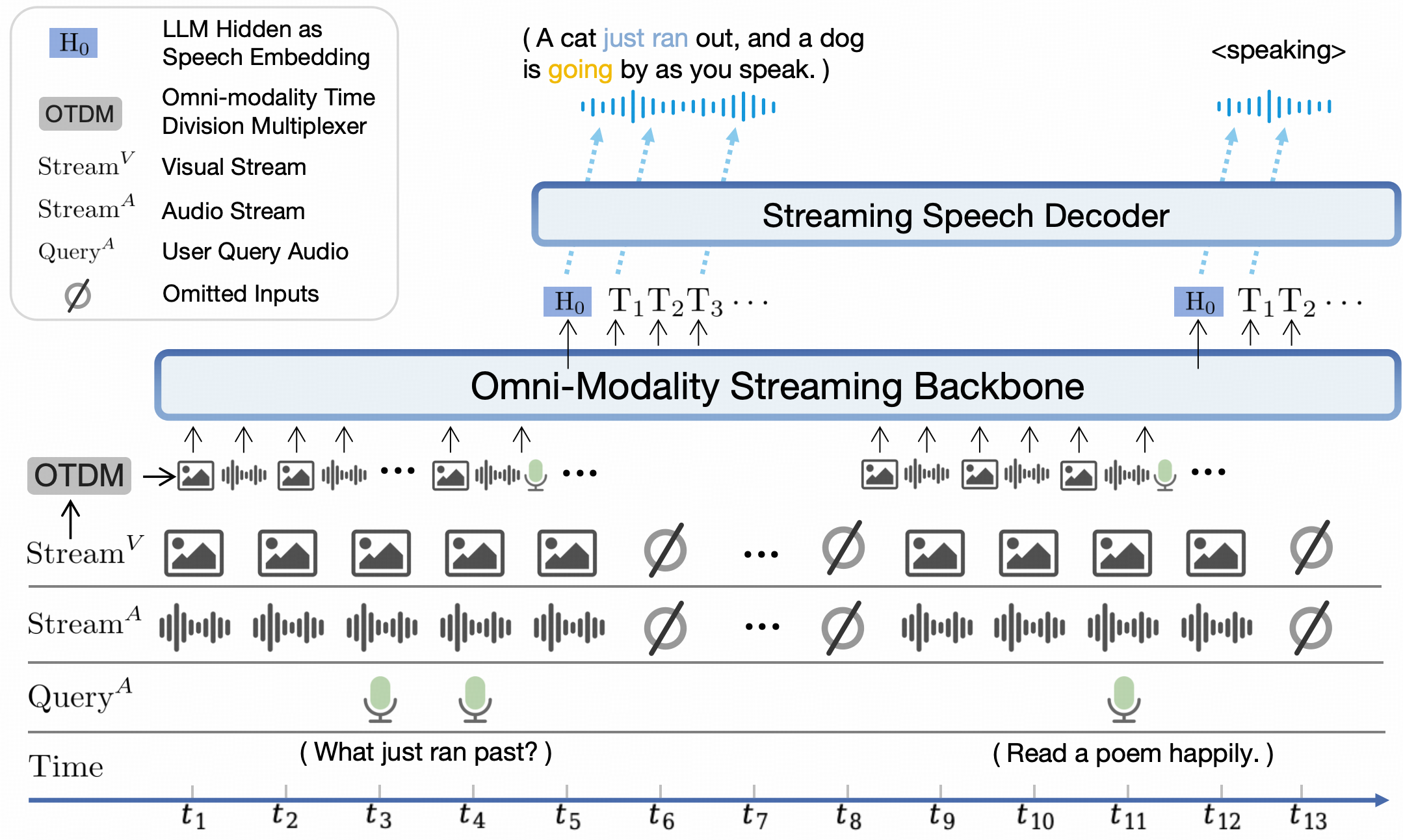

Model Architecture.

- End-to-end Omni-modal Architecture. Different modality encoders/decoders are connected and trained in an end-to-end fashion to fully exploit rich multimodal knowledge. The model is trained in a fully end-to-end manner with only cross-entropy (CE) loss.

- Omni-modal Live Streaming Mechanism. (1) We change the offline modality encoder/decoders into online ones for streaming inputs/outputs. (2) We devise a time-division multiplexing (TDM) mechanism for omni-modality streaming processing in the LLM backbone. It divides parallel omni-modality streams into sequential info within small periodic time slices.

- Configurable Speech Modeling Design. We devise a multimodal system prompt, including traditional text system prompt, and a new audio system prompt to determine the assistant voice. This enables flexible voice configurations in inference time, and also facilitates end-to-end voice cloning and description-based voice creation.
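The time-division multiplexing (TDM) idea above can be illustrated with a toy interleaver. This is a minimal sketch under stated assumptions, not the model's actual implementation: the function name `tdm_interleave`, the `(timestamp, payload)` input format, the one-second slice length, and the video-before-audio ordering inside a slice are all hypothetical choices made for illustration.

```python
from collections import defaultdict


def tdm_interleave(video_frames, audio_chunks, slice_sec=1.0):
    """Merge two timestamped streams into one sequence ordered by time slice.

    Each input is a list of (timestamp_sec, payload) pairs. Items that fall
    in the same slice are emitted together, video first, then audio.
    """
    slices = defaultdict(lambda: {"video": [], "audio": []})
    for t, payload in video_frames:
        slices[int(t // slice_sec)]["video"].append(payload)
    for t, payload in audio_chunks:
        slices[int(t // slice_sec)]["audio"].append(payload)

    sequence = []
    for idx in sorted(slices):
        for modality in ("video", "audio"):
            for payload in slices[idx][modality]:
                sequence.append((idx, modality, payload))
    return sequence


video = [(0.0, "v0"), (1.0, "v1")]
audio = [(0.2, "a0"), (0.7, "a1"), (1.3, "a2")]
for item in tdm_interleave(video, audio):
    print(item)
```

The parallel streams become a single sequence in which each periodic slice contributes its video and audio items in turn, which is the shape of input a sequential LLM backbone can consume.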

Evaluation

Click to view visual understanding results.

Image Understanding

| Model | Size | Token Density+ | OpenCompass | OCRBench | MathVista mini | ChartQA | MMVet | MMStar | MME | MMB1.1 test | AI2D | MMMU val | HallusionBench | TextVQA val | DocVQA test | MathVerse mini | MathVision | MMHal Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Proprietary | ||||||||||||||||||

| GPT-4o-20240513 | - | 1088 | 69.9 | 736 | 61.3 | 85.7 | 69.1 | 63.9 | 2328.7 | 82.2 | 84.6 | 69.2 | 55.0 | - | 92.8 | 50.2 | 30.4 | 3.6 |

| Claude3.5-Sonnet | - | 750 | 67.9 | 788 | 61.6 | 90.8 | 66.0 | 62.2 | 1920.0 | 78.5 | 80.2 | 65.9 | 49.9 | - | 95.2 | - | - | 3.4 |

| Gemini 1.5 Pro | - | - | 64.4 | 754 | 57.7 | 81.3 | 64.0 | 59.1 | 2110.6 | 73.9 | 79.1 | 60.6 | 45.6 | 73.5 | 86.5 | - | 19.2 | - |

| GPT-4o-mini-20240718 | - | 1088 | 64.1 | 785 | 52.4 | - | 66.9 | 54.8 | 2003.4 | 76.0 | 77.8 | 60.0 | 46.1 | - | - | - | - | 3.3 |

| Open Source | ||||||||||||||||||

| Cambrian-34B | 34B | 1820 | 58.3 | 591 | 50.3 | 75.6 | 53.2 | 54.2 | 2049.9 | 77.8 | 79.5 | 50.4 | 41.6 | 76.7 | 75.5 | - | - | - |

| GLM-4V-9B | 13B | 784 | 59.1 | 776 | 51.1 | - | 58.0 | 54.8 | 2018.8 | 67.9 | 71.2 | 46.9 | 45.0 | - | - | - | - | - |

| Pixtral-12B | 12B | 256 | 61.0 | 685 | 56.9 | 81.8 | 58.5 | 54.5 | - | 72.7 | 79.0 | 51.1 | 47.0 | 75.7 | 90.7 | - | - | - |

| VITA-1.5 | 8B | 784 | 63.3 | 741 | 66.2 | - | 52.7 | 60.2 | 2328.1 | 76.8 | 79.2 | 52.6 | 44.6 | - | - | - | - | - |

| DeepSeek-VL2-27B (4B) | 27B | 672 | 66.4 | 809 | 63.9 | 86.0 | 60.0 | 61.9 | 2253.0 | 81.2 | 83.8 | 54.0 | 45.3 | 84.2 | 93.3 | - | - | 3.0 |

| Qwen2-VL-7B | 8B | 784 | 67.1 | 866 | 58.2 | 83.0 | 62.0 | 60.7 | 2326.0 | 81.8 | 83.0 | 54.1 | 50.6 | 84.3 | 94.5 | 31.9 | 16.3 | 3.2 |

| LLaVA-OneVision-72B | 72B | 182 | 68.1 | 741 | 67.5 | 83.7 | 60.6 | 65.8 | 2261.0 | 85.0 | 85.6 | 56.8 | 49.0 | 80.5 | 91.3 | 39.1 | - | 3.5 |

| InternVL2.5-8B | 8B | 706 | 68.3 | 822 | 64.4 | 84.8 | 62.8 | 62.8 | 2344.0 | 83.6 | 84.5 | 56.0 | 50.1 | 79.1 | 93.0 | 39.5 | 19.7 | 3.4 |

| MiniCPM-V 2.6 | 8B | 2822 | 65.2 | 852* | 60.6 | 79.4 | 60.0 | 57.5 | 2348.4* | 78.0 | 82.1 | 49.8* | 48.1* | 80.1 | 90.8 | 25.7 | 18.3 | 3.6 |

| MiniCPM-o 2.6 | 8B | 2822 | 70.2 | 897* | 71.9* | 86.9* | 67.5 | 64.0 | 2372.0* | 80.5 | 85.8 | 50.4* | 51.9 | 82.0 | 93.5 | 41.4* | 23.1* | 3.8 |

+ Token Density: number of pixels encoded into each visual token at maximum resolution, i.e., # pixels at maximum resolution / # visual tokens.

Note: For proprietary models, we calculate token density based on the image encoding charging strategy defined in the official API documentation, which provides an upper-bound estimation.
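As a quick check of the token density definition, the value reported for MiniCPM-o 2.6 follows from the 1344x1344 maximum resolution and the 640 visual tokens mentioned earlier. The helper name `token_density` is hypothetical.

```python
def token_density(width, height, visual_tokens):
    """Pixels encoded per visual token at the given resolution."""
    return (width * height) / visual_tokens


density = token_density(1344, 1344, 640)
print(round(density))  # 2822
```

1344 x 1344 is 1,806,336 pixels (about 1.8M), and dividing by 640 tokens gives roughly 2822 pixels per token, matching the table entry.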

Multi-image and Video Understanding

| Model | Size | BLINK val | Mantis Eval | MIRB | Video-MME (wo / w subs) |

|---|---|---|---|---|---|

| Proprietary | |||||

| GPT-4o-20240513 | - | 68.0 | - | - | 71.9/77.2 |

| GPT4V | - | 54.6 | 62.7 | 53.1 | 59.9/63.3 |

| Open-source | |||||

| VITA-1.5 | 8B | 45.0 | - | - | 56.1/58.7 |

| LLaVA-NeXT-Interleave 14B | 14B | 52.6 | 66.4 | 30.2 | - |

| LLaVA-OneVision-72B | 72B | 55.4 | 77.6 | - | 66.2/69.5 |

| MANTIS 8B | 8B | 49.1 | 59.5 | 34.8 | - |

| Qwen2-VL-7B | 8B | 53.2 | 69.6* | 67.6* | 63.3/69.0 |

| InternVL2.5-8B | 8B | 54.8 | 67.7 | 52.5 | 64.2/66.9 |

| MiniCPM-V 2.6 | 8B | 53.0 | 69.1 | 53.8 | 60.9/63.6 |

| MiniCPM-o 2.6 | 8B | 56.7 | 71.9 | 58.6 | 63.9/67.9 |

Click to view audio understanding and speech conversation results.

Audio Understanding

| Model | Size | AISHELL-1 (ASR zh, CER↓) | Fleurs zh (ASR zh, CER↓) | WenetSpeech test-net (ASR zh, CER↓) | LibriSpeech test-clean (ASR en, WER↓) | GigaSpeech (ASR en, WER↓) | TED-LIUM (ASR en, WER↓) | CoVoST en2zh (AST, BLEU↑) | CoVoST zh2en (AST, BLEU↑) | MELD emotion (Emotion, ACC↑) |
|---|---|---|---|---|---|---|---|---|---|---|
| Proprietary | | | | | | | | | | |
| GPT-4o-Realtime | - | 7.3* | 5.4* | 28.9* | 2.6* | 12.9* | 4.8* | 37.1* | 15.7* | 33.2* |
| Gemini 1.5 Pro | - | 4.5* | 5.9* | 14.3* | 2.9* | 10.6* | 3.0* | 47.3* | 22.6* | 48.4* |
| Open-Source | | | | | | | | | | |
| Qwen2-Audio-7B | 8B | - | 7.5 | - | 1.6 | - | - | 45.2 | 24.4 | 55.3 |
| Qwen2-Audio-7B-Instruct | 8B | 2.6* | 6.9* | 10.3* | 3.1* | 9.7* | 5.9* | 39.5* | 22.9* | 17.4* |
| VITA-1.5 | 8B | 2.16 | - | 8.4 | 3.4 | - | - | - | - | - |
| GLM-4-Voice-Base | 9B | 2.5 | - | - | 2.8 | - | - | - | - | |
| MiniCPM-o 2.6 | 8B | 1.6 | 4.4 | 6.9 | 1.7 | 8.7 | 3.0 | 48.2 | 27.2 | 52.4 |
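For readers unfamiliar with the ASR metrics above, word error rate (WER) and character error rate (CER) are the edit distance between the reference and the recognized text, divided by the reference length. The sketch below uses the standard definition. It is not the evaluation script behind the table, and real evaluations usually normalize text (casing, punctuation) first. The table values appear to be percentages.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference, hypothesis):
    """Word error rate; the reference must contain at least one word."""
    ref = reference.split()
    return edit_distance(ref, hypothesis.split()) / len(ref)


def cer(reference, hypothesis):
    """Character error rate with spaces ignored, as is common for Chinese."""
    ref = list(reference.replace(" ", ""))
    hyp = list(hypothesis.replace(" ", ""))
    return edit_distance(ref, hyp) / len(ref)


print(wer("the cat sat on the mat", "the cat sat on mat"))
print(cer("你好世界", "你好时界"))  # 0.25
```

The first call drops one of six reference words, so the WER is 1/6. The second substitutes one of four characters, so the CER is 0.25.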

Speech Generation

| Model | Size | Speech Llama Q. (ACC↑) | Speech Web Q. (ACC↑) | Speech Trivia QA (ACC↑) | Speech AlpacaEval (G-Eval, 10 point↑) | AudioArena Semantic ELO score↑ | AudioArena Acoustic ELO score↑ | AudioArena Overall ELO score↑ | AudioArena UTMOS↑ | AudioArena ASR-WER↓ |
|---|---|---|---|---|---|---|---|---|---|---|
| Proprietary | | | | | | | | | | |
| GPT-4o-Realtime | - | 71.7 | 51.6 | 69.7 | 7.4 | 1157 | 1203 | 1200 | 4.2 | 2.3 |
| Open-Source | | | | | | | | | | |
| GLM-4-Voice | 9B | 50.0 | 32.0 | 36.4 | 5.1 | 999 | 1147 | 1035 | 4.1 | 11.7 |
| Llama-Omni | 8B | 45.3 | 22.9 | 10.7 | 3.9 | 960 | 878 | 897 | 3.2 | 24.3 |
| VITA-1.5 | 8B | 46.7 | 28.1 | 23.3 | 2.0 | - | - | - | - | - |
| Moshi | 7B | 43.7 | 23.8 | 16.7 | 2.4 | 871 | 808 | 875 | 2.8 | 8.2 |
| Mini-Omni | 1B | 22.0 | 12.8 | 6.9 | 2.5 | 926 | 803 | 865 | 3.4 | 10.0 |
| MiniCPM-o 2.6 | 8B | 61.0 | 40.0 | 40.2 | 5.1 | 1088 | 1163 | 1131 | 4.2 | 9.8 |

End-to-end Voice Cloning

| Model | Seed-TTS test-zh (SIMO↑) | Seed-TTS test-en (SIMO↑) |
|---|---|---|
| F5-TTS | 76 | 67 |
| CosyVoice | 75 | 64 |
| FireRedTTS | 63 | 46 |
| MiniCPM-o 2.6 | 57 | 47 |

Click to view multimodal live streaming results.

Multimodal Live Streaming: results on StreamingBench

| Model | Size | Real-Time Video Understanding | Omni-Source Understanding | Contextual Understanding | Overall |
|---|---|---|---|---|---|
| Proprietary | | | | | |
| Gemini 1.5 Pro | - | 77.4 | 67.8 | 51.1 | 70.3 |
| GPT-4o-202408 | - | 74.5 | 51.0 | 48.0 | 64.1 |
| Claude-3.5-Sonnet | - | 74.0 | 41.4 | 37.8 | 59.7 |
| Open-source | | | | | |
| VILA-1.5 | 8B | 61.5 | 37.5 | 26.7 | 49.5 |
| LongVA | 7B | 63.1 | 35.9 | 30.2 | 50.7 |
| LLaVA-Next-Video-34B | 34B | 69.8 | 41.7 | 34.3 | 56.7 |
| Qwen2-VL-7B | 8B | 71.2 | 40.7 | 33.1 | 57.0 |
| InternVL2-8B | 8B | 70.1 | 42.7 | 34.1 | 57.0 |
| VITA-1.5 | 8B | 70.9 | 40.8 | 35.8 | 57.4 |
| LLaVA-OneVision-7B | 8B | 74.3 | 40.8 | 31.0 | 58.4 |
| InternLM-XC2.5-OL-7B | 8B | 75.4 | 46.2 | 33.6 | 60.8 |
| MiniCPM-V 2.6 | 8B | 72.4 | 40.2 | 33.4 | 57.7 |
| MiniCPM-o 2.6 | 8B | 79.9 | 53.4 | 38.5 | 66.0 |

Examples

We deploy MiniCPM-o 2.6 on end devices. The demo video is the raw-speed recording on an iPad Pro and a Web demo.

Legacy Models

| Model | Introduction and Guidance |

|---|---|

| MiniCPM-V 4.0 | Document |

| MiniCPM-V 2.6 | Document |

| MiniCPM-Llama3-V 2.5 | Document |

| MiniCPM-V 2.0 | Document |

| MiniCPM-V 1.0 | Document |

| OmniLMM-12B | Document |

MiniCPM-V & o Cookbook

Discover comprehensive, ready-to-deploy solutions for the MiniCPM-V and MiniCPM-o model series in our structured cookbook, which empowers developers to rapidly implement multimodal AI applications with integrated vision, speech, and live-streaming capabilities. Key features include:

Easy Usage Documentation

Our comprehensive documentation website presents every recipe in a clear, well-organized manner. All features are displayed at a glance, making it easy for you to quickly find exactly what you need.

Broad User Spectrum

We support a wide range of users, from individuals to enterprises and researchers.

- Individuals: Enjoy effortless inference using Ollama and Llama.cpp with minimal setup.

- Enterprises: Achieve high-throughput, scalable performance with vLLM and SGLang.

- Researchers: Leverage advanced frameworks including Transformers, LLaMA-Factory, SWIFT, and Align-anything to enable flexible model development and cutting-edge experimentation.

Versatile Deployment Scenarios

Our ecosystem delivers optimal solutions for a variety of hardware environments and deployment demands.

- Web demo: Launch interactive multimodal AI web demo with FastAPI.

- Quantized deployment: Maximize efficiency and minimize resource consumption using GGUF and BNB.

- End devices: Bring powerful AI experiences to iPhone and iPad, supporting offline and privacy-sensitive applications.

Chat with Our Demo on Gradio 🤗

We provide online and local demos powered by Hugging Face Gradio.

Online Demo

Click here to try out the online demo of MiniCPM-o 2.6 | MiniCPM-V 2.6 | MiniCPM-Llama3-V 2.5 | MiniCPM-V 2.0.

Local WebUI Demo

You can easily build your own local WebUI demo using the following commands.

Please ensure that transformers==4.44.2 is installed, as other versions may have compatibility issues.

If you are using an older version of PyTorch, you might encounter the error "weight_norm_fwd_first_dim_kernel" not implemented for 'BFloat16'. In that case, add self.minicpmo_model.tts.float() during model initialization.

For real-time voice/video call demo:

- launch model server:

pip install -r requirements_o2.6.txt

python web_demos/minicpm-o_2.6/model_server.py

- launch web server:

# Make sure Node and PNPM are installed.

sudo apt-get update

sudo apt-get install nodejs npm

npm install -g pnpm

cd web_demos/minicpm-o_2.6/web_server

# Create an SSL cert for HTTPS; HTTPS is required to request camera and microphone permissions.

bash ./make_ssl_cert.sh # output key.pem and cert.pem

pnpm install # install requirements

pnpm run dev # start server

Open https://localhost:8088/ in browser and enjoy the real-time voice/video call.

For chatbot demo:

pip install -r requirements_o2.6.txt

python web_demos/minicpm-o_2.6/chatbot_web_demo_o2.6.py

Open http://localhost:8000/ in browser and enjoy the vision mode chatbot.

Inference

Model Zoo

| Model | Device | Memory | Description | Download |

|---|---|---|---|---|

| MiniCPM-V 4.5 | GPU | 18 GB | The latest version, strong end-side multimodal performance for single image, multi-image and video understanding. | 🤗  |

| MiniCPM-V 4.5 gguf | CPU | 8 GB | The gguf version, lower memory usage and faster inference. | 🤗  |

| MiniCPM-V 4.5 int4 | GPU | 9 GB | The int4 quantized version, lower GPU memory usage. | 🤗  |

| MiniCPM-V 4.5 AWQ | GPU | 9 GB | The int4 quantized version, lower GPU memory usage. | 🤗  |

| MiniCPM-o 2.6 | GPU | 18 GB | The latest version, achieving GPT-4o level performance for vision, speech and multimodal live streaming on end-side devices. | 🤗  |

| MiniCPM-o 2.6 gguf | CPU | 8 GB | The gguf version, lower memory usage and faster inference. | 🤗  |

| MiniCPM-o 2.6 int4 | GPU | 9 GB | The int4 quantized version, lower GPU memory usage. | 🤗  |

Multi-turn Conversation

If you wish to enable long-thinking mode, provide the argument enable_thinking=True to the chat function.

pip install -r requirements_o2.6.txt

Please refer to the following code to run.

import torch

from PIL import Image

from transformers import AutoModel, AutoTokenizer

torch.manual_seed(100)

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True, # or openbmb/MiniCPM-o-2_6

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

model = model.eval().cuda()

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True) # or openbmb/MiniCPM-o-2_6

image = Image.open('./assets/minicpmo2_6/show_demo.jpg').convert('RGB')

enable_thinking=False # If `enable_thinking=True`, the long-thinking mode is enabled.

# First round chat

question = "What is the landform in the picture?"

msgs = [{'role': 'user', 'content': [image, question]}]

answer = model.chat(

msgs=msgs,

tokenizer=tokenizer,

enable_thinking=enable_thinking

)

print(answer)

# Second round chat, pass history context of multi-turn conversation

msgs.append({"role": "assistant", "content": [answer]})

msgs.append({"role": "user", "content": ["What should I pay attention to when traveling here?"]})

answer = model.chat(

msgs=msgs,

tokenizer=tokenizer

)

print(answer)

You will get the following output:

# round1

The landform in the picture is karst topography. Karst landscapes are characterized by distinctive, jagged limestone hills or mountains with steep, irregular peaks and deep valleys—exactly what you see here These unique formations result from the dissolution of soluble rocks like limestone over millions of years through water erosion.

This scene closely resembles the famous karst landscape of Guilin and Yangshuo in China’s Guangxi Province. The area features dramatic, pointed limestone peaks rising dramatically above serene rivers and lush green forests, creating a breathtaking and iconic natural beauty that attracts millions of visitors each year for its picturesque views.

# round2

When traveling to a karst landscape like this, here are some important tips:

1. Wear comfortable shoes: The terrain can be uneven and hilly.

2. Bring water and snacks for energy during hikes or boat rides.

3. Protect yourself from the sun with sunscreen, hats, and sunglasses—especially since you’ll likely spend time outdoors exploring scenic spots.

4. Respect local customs and nature regulations by not littering or disturbing wildlife.

By following these guidelines, you'll have a safe and enjoyable trip while appreciating the stunning natural beauty of places such as Guilin’s karst mountains.
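The second round in the example works because the previous answer and the new question are appended to the same `msgs` list before calling `model.chat` again. That bookkeeping can be wrapped in a small helper, sketched below. The helper name `extend_history` is hypothetical and not part of the model's API; it only builds the message list in the format used by the example above and never calls the model.

```python
def extend_history(msgs, answer, next_question):
    """Return a new message list with the assistant reply and the next user turn.

    The input list is left unmodified so earlier rounds can be reused.
    """
    return msgs + [
        {"role": "assistant", "content": [answer]},
        {"role": "user", "content": [next_question]},
    ]


# Plain strings stand in for the PIL image and the model's reply.
round1 = [{"role": "user", "content": ["<image>", "What is the landform in the picture?"]}]
round2 = extend_history(round1, "Karst topography.",
                        "What should I pay attention to when traveling here?")
print(len(round2))           # 3
print(round2[-1]["role"])    # user
```

The resulting list can be passed as `msgs=` to `model.chat`. If thinking mode should stay the same across rounds, pass `enable_thinking` on every call, since the second call in the example omits it.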

Chat with Multiple Images

Click to view Python code running MiniCPM-V-4_5 with multiple images input.

import torch

from PIL import Image

from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True, # or openbmb/MiniCPM-o-2_6

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

model = model.eval().cuda()

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True) # or openbmb/MiniCPM-o-2_6

image1 = Image.open('image1.jpg').convert('RGB')

image2 = Image.open('image2.jpg').convert('RGB')

question = 'Compare image 1 and image 2, tell me about the differences between image 1 and image 2.'

msgs = [{'role': 'user', 'content': [image1, image2, question]}]

answer = model.chat(

msgs=msgs,

tokenizer=tokenizer

)

print(answer)

In-context Few-shot Learning

Click to view Python code running MiniCPM-V-4_5 with few-shot input.

import torch

from PIL import Image

from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True, # or openbmb/MiniCPM-o-2_6

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

model = model.eval().cuda()

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True) # or openbmb/MiniCPM-o-2_6

question = "production date"

image1 = Image.open('example1.jpg').convert('RGB')

answer1 = "2023.08.04"

image2 = Image.open('example2.jpg').convert('RGB')

answer2 = "2007.04.24"

image_test = Image.open('test.jpg').convert('RGB')

msgs = [

{'role': 'user', 'content': [image1, question]}, {'role': 'assistant', 'content': [answer1]},

{'role': 'user', 'content': [image2, question]}, {'role': 'assistant', 'content': [answer2]},

{'role': 'user', 'content': [image_test, question]}

]

answer = model.chat(

msgs=msgs,

tokenizer=tokenizer

)

print(answer)
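The alternating user/assistant pattern above extends to any number of demonstrations. Below is a minimal sketch, assuming a hypothetical helper named `build_few_shot_msgs` (it is not part of the MiniCPM API). Plain strings stand in for the `PIL.Image` objects so that the snippet runs without image files.

```python
def build_few_shot_msgs(examples, question, test_image):
    """Build a few-shot message list in the format used above.

    examples: list of (image, answer) pairs used as demonstrations.
    The helper name and signature are illustrative assumptions.
    """
    msgs = []
    for image, answer in examples:
        msgs.append({'role': 'user', 'content': [image, question]})
        msgs.append({'role': 'assistant', 'content': [answer]})
    # The final user turn carries the test image and has no assistant reply.
    msgs.append({'role': 'user', 'content': [test_image, question]})
    return msgs


# Strings stand in for PIL images here.
msgs = build_few_shot_msgs(
    examples=[('example1.jpg', '2023.08.04'), ('example2.jpg', '2007.04.24')],
    question='production date',
    test_image='test.jpg',
)
print(len(msgs))  # 5
```

The resulting list can be passed as `msgs` to `model.chat` once the placeholders are replaced with real images.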

Chat with Video

Click to view Python code running MiniCPM-V-4_5 with video input and the 3D-Resampler.

# The 3D-Resampler compresses multiple frames into 64 tokens by introducing temporal_ids.

# To achieve this, you need to organize your video data into two corresponding sequences:

# frames: List[Image]

# temporal_ids: List[List[Int]].

import torch

from PIL import Image

from transformers import AutoModel, AutoTokenizer

from decord import VideoReader, cpu # pip install decord

from scipy.spatial import cKDTree

import numpy as np

import math

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True, # or openbmb/MiniCPM-o-2_6

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

model = model.eval().cuda()

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-4_5', trust_remote_code=True) # or openbmb/MiniCPM-o-2_6

MAX_NUM_FRAMES=180 # Indicates the maximum number of frames received after the videos are packed. The actual maximum number of valid frames is MAX_NUM_FRAMES * MAX_NUM_PACKING.

MAX_NUM_PACKING=3 # indicates the maximum packing number of video frames. valid range: 1-6

TIME_SCALE = 0.1

def map_to_nearest_scale(values, scale):
    tree = cKDTree(np.asarray(scale)[:, None])
    _, indices = tree.query(np.asarray(values)[:, None])
    return np.asarray(scale)[indices]

def group_array(arr, size):
    return [arr[i:i+size] for i in range(0, len(arr), size)]

def encode_video(video_path, choose_fps=3, force_packing=None):
    def uniform_sample(l, n):
        gap = len(l) / n
        idxs = [int(i * gap + gap / 2) for i in range(n)]
        return [l[i] for i in idxs]

    vr = VideoReader(video_path, ctx=cpu(0))
    fps = vr.get_avg_fps()
    video_duration = len(vr) / fps

    if choose_fps * int(video_duration) <= MAX_NUM_FRAMES:
        packing_nums = 1
        choose_frames = round(min(choose_fps, round(fps)) * min(MAX_NUM_FRAMES, video_duration))
    else:
        packing_nums = math.ceil(video_duration * choose_fps / MAX_NUM_FRAMES)
        if packing_nums <= MAX_NUM_PACKING:
            choose_frames = round(video_duration * choose_fps)
        else:
            choose_frames = round(MAX_NUM_FRAMES * MAX_NUM_PACKING)
            packing_nums = MAX_NUM_PACKING

    frame_idx = [i for i in range(0, len(vr))]
    frame_idx = np.array(uniform_sample(frame_idx, choose_frames))

    if force_packing:
        packing_nums = min(force_packing, MAX_NUM_PACKING)

    print(video_path, ' duration:', video_duration)
    print(f'get video frames={len(frame_idx)}, packing_nums={packing_nums}')

    frames = vr.get_batch(frame_idx).asnumpy()

    frame_idx_ts = frame_idx / fps
    scale = np.arange(0, video_duration, TIME_SCALE)

    frame_ts_id = map_to_nearest_scale(frame_idx_ts, scale) / TIME_SCALE
    frame_ts_id = frame_ts_id.astype(np.int32)

    assert len(frames) == len(frame_ts_id)

    frames = [Image.fromarray(v.astype('uint8')).convert('RGB') for v in frames]
    frame_ts_id_group = group_array(frame_ts_id, packing_nums)

    return frames, frame_ts_id_group

video_path="video_test.mp4"

fps = 5 # fps for video

force_packing = None # You can set force_packing to ensure that 3D packing is forcibly enabled; otherwise, encode_video will dynamically set the packing quantity based on the duration.

frames, frame_ts_id_group = encode_video(video_path, fps, force_packing=force_packing)

question = "Describe the video"

msgs = [

{'role': 'user', 'content': frames + [question]},

]

answer = model.chat(

msgs=msgs,

tokenizer=tokenizer,

use_image_id=False,

max_slice_nums=1,

temporal_ids=frame_ts_id_group

)

print(answer)
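To see how `encode_video` chooses the number of sampled frames and the packing size, the branching can be isolated from the video decoding. The sketch below mirrors that branching in a hypothetical function `plan_sampling` (the name is an assumption, and the `force_packing` override is left out), so the arithmetic can be checked without a video file.

```python
import math

MAX_NUM_FRAMES = 180   # same constants as in the example above
MAX_NUM_PACKING = 3


def plan_sampling(video_duration, source_fps, choose_fps=3):
    """Return (choose_frames, packing_nums), following the branches of encode_video."""
    if choose_fps * int(video_duration) <= MAX_NUM_FRAMES:
        # Short video: no packing, sample at most choose_fps frames per second.
        packing_nums = 1
        choose_frames = round(min(choose_fps, round(source_fps)) * min(MAX_NUM_FRAMES, video_duration))
    else:
        packing_nums = math.ceil(video_duration * choose_fps / MAX_NUM_FRAMES)
        if packing_nums <= MAX_NUM_PACKING:
            choose_frames = round(video_duration * choose_fps)
        else:
            # Very long video: cap both the frame count and the packing size.
            choose_frames = round(MAX_NUM_FRAMES * MAX_NUM_PACKING)
            packing_nums = MAX_NUM_PACKING
    return choose_frames, packing_nums


def group_array(arr, size):
    """Split arr into consecutive groups of at most `size` items."""
    return [arr[i:i + size] for i in range(0, len(arr), size)]


for duration in (30, 60, 600):
    print(duration, plan_sampling(duration, source_fps=30, choose_fps=5))
# 30 (150, 1)
# 60 (300, 2)
# 600 (540, 3)

print(group_array(list(range(7)), 3))  # [[0, 1, 2], [3, 4, 5], [6]]
```

With `choose_fps=5`, a 30-second clip stays unpacked, a 60-second clip packs two frames per group, and a 10-minute clip hits the cap of 180 × 3 = 540 frames. `group_array` then turns the flat list of temporal ids into one list per packed group, which is the `List[List[Int]]` shape passed as `temporal_ids`.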

Speech and Audio Mode

Model initialization

import torch

import librosa

from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained('openbmb/MiniCPM-o-2_6', trust_remote_code=True,

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

model = model.eval().cuda()

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-o-2_6', trust_remote_code=True)

model.init_tts()

model.tts.float()

Mimick

The Mimick task reflects a model's end-to-end speech modeling capability. The model takes audio input, outputs an ASR transcription, and then reconstructs the original audio with high similarity. The higher the similarity between the reconstructed audio and the original audio, the stronger the model's foundational capability in end-to-end speech modeling.

mimick_prompt = "Please repeat each user's speech, including voice style and speech content."

audio_input, _ = librosa.load('./assets/input_examples/Trump_WEF_2018_10s.mp3', sr=16000, mono=True) # load the audio to be mimicked

# `./assets/input_examples/fast-pace.wav`,

# `./assets/input_examples/chi-english-1.wav`

# `./assets/input_examples/exciting-emotion.wav`

# for different aspects of speech-centric features.

msgs = [{'role': 'user', 'content': [mimick_prompt, audio_input]}]

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

temperature=0.3,

generate_audio=True,

output_audio_path='output_mimick.wav', # save the tts result to output_audio_path

)

General Speech Conversation with Configurable Voices

A general usage scenario of MiniCPM-o-2.6 is role-playing a specific character based on the audio prompt. It will mimic the voice of the character to some extent and act like the character in text, including language style. In this mode, MiniCPM-o-2.6 sounds more natural and human-like. Self-defined audio prompts can be used to customize the voice of the character in an end-to-end manner.

ref_audio, _ = librosa.load('./assets/input_examples/icl_20.wav', sr=16000, mono=True) # load the reference audio

sys_prompt = model.get_sys_prompt(ref_audio=ref_audio, mode='audio_roleplay', language='en')

# round one

user_question = {'role': 'user', 'content': [librosa.load('xxx.wav', sr=16000, mono=True)[0]]}

msgs = [sys_prompt, user_question]

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_roleplay_round_1.wav',

)

# round two

# list.append modifies msgs in place and returns None, so do not reassign its result
msgs.append({'role': 'assistant', 'content': res})

user_question = {'role': 'user', 'content': [librosa.load('xxx.wav', sr=16000, mono=True)[0]]}

msgs.append(user_question)

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_roleplay_round_2.wav',

)

print(res)
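Multi-round conversations depend on extending the same `msgs` list between calls. Because Python's `list.append` mutates the list in place and returns `None`, a small helper can make the bookkeeping explicit. The sketch below uses a hypothetical helper `append_turn` and placeholder strings instead of real audio arrays and model replies.

```python
def append_turn(msgs, role, content):
    """Append one turn in place and return the same list for convenience."""
    msgs.append({'role': role, 'content': content})
    return msgs


# Placeholders stand in for the object returned by model.get_sys_prompt(...),
# for the audio arrays, and for the reply returned by model.chat(...).
sys_prompt = '<sys_prompt>'
msgs = [sys_prompt, {'role': 'user', 'content': ['<audio round 1>']}]
res = '<assistant reply round 1>'

append_turn(msgs, 'assistant', res)             # record the model's reply
append_turn(msgs, 'user', ['<audio round 2>'])  # add the next user turn

print(len(msgs))         # 4
print(msgs[-1]['role'])  # user
```

After these two calls, `msgs` holds the full history and can be passed to `model.chat` for round two.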

Speech Conversation as an AI Assistant

An enhanced feature of MiniCPM-o-2.6 is acting as an AI assistant, though with a limited choice of voices. In this mode, MiniCPM-o-2.6 sounds less human-like and more like a voice assistant, and it follows instructions more closely. For demos, we suggest using assistant_female_voice, assistant_male_voice, or assistant_default_female_voice. Other voices may work but are not as stable as these default voices.

Note that assistant_female_voice and assistant_male_voice are more stable but sound robotic, while assistant_default_female_voice sounds more human-like but is less stable: its voice often changes across turns. We suggest trying the stable voices assistant_female_voice and assistant_male_voice.

ref_audio, _ = librosa.load('./assets/input_examples/assistant_female_voice.wav', sr=16000, mono=True) # or use `./assets/input_examples/assistant_male_voice.wav`

sys_prompt = model.get_sys_prompt(ref_audio=ref_audio, mode='audio_assistant', language='en')

user_question = {'role': 'user', 'content': [librosa.load('xxx.wav', sr=16000, mono=True)[0]]} # load the user's audio question

# round one

msgs = [sys_prompt, user_question]

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_assistant_round_1.wav',

)

# round two

# list.append modifies msgs in place and returns None, so do not reassign its result
msgs.append({'role': 'assistant', 'content': res})

user_question = {'role': 'user', 'content': [librosa.load('xxx.wav', sr=16000, mono=True)[0]]}

msgs.append(user_question)

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_assistant_round_2.wav',

)

print(res)

Instruction-to-Speech

MiniCPM-o-2.6 can also do Instruction-to-Speech, aka Voice Creation. You can describe a voice in detail, and the model will generate a voice that matches the description. For more Instruction-to-Speech sample instructions, you can refer to https://voxinstruct.github.io/VoxInstruct/.

instruction = 'Speak like a male charming superstar, radiating confidence and style in every word.'

msgs = [{'role': 'user', 'content': [instruction]}]

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_voice_creation.wav',

)

Voice Cloning

MiniCPM-o-2.6 can also do zero-shot text-to-speech, aka Voice Cloning. In this mode, the model acts like a TTS model.

ref_audio, _ = librosa.load('./assets/input_examples/icl_20.wav', sr=16000, mono=True) # load the reference audio

sys_prompt = model.get_sys_prompt(ref_audio=ref_audio, mode='voice_cloning', language='en')

text_prompt = "Please read the text below."

user_question = {'role': 'user', 'content': [text_prompt, "content that you want to read"]}

msgs = [sys_prompt, user_question]

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_voice_cloning.wav',

)

Addressing Various Audio Understanding Tasks

MiniCPM-o-2.6 can also be used to address various audio understanding tasks, such as ASR, speaker analysis, general audio captioning, and sound scene tagging.

For audio-to-text tasks, you can use the following prompts:

- ASR with ZH (same as AST en2zh): 请仔细听这段音频片段,并将其内容逐字记录。 (English: "Please listen carefully to this audio clip and transcribe its content word for word.")

- ASR with EN (same as AST zh2en): Please listen to the audio snippet carefully and transcribe the content.

- Speaker Analysis: Based on the speaker's content, speculate on their gender, condition, age range, and health status.

- General Audio Caption: Summarize the main content of the audio.

- General Sound Scene Tagging: Utilize one keyword to convey the audio's content or the associated scene.

task_prompt = "Please listen to the audio snippet carefully and transcribe the content." + "\n" # can change to other prompts.

audio_input, _ = librosa.load('./assets/input_examples/audio_understanding.mp3', sr=16000, mono=True) # load the audio to be captioned

msgs = [{'role': 'user', 'content': [task_prompt, audio_input]}]

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

max_new_tokens=128,

use_tts_template=True,

generate_audio=True,

temperature=0.3,

output_audio_path='result_audio_understanding.wav',

)

print(res)
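Switching between the audio understanding tasks only changes the text prompt. One way to organize this is a lookup table from task name to prompt. In the sketch below the dictionary keys and the helper `build_audio_task_msgs` are assumptions made for illustration; the prompt strings are the ones listed above, and a short list of zeros stands in for the audio array.

```python
# Keys are hypothetical labels; the prompt strings come from the list above.
TASK_PROMPTS = {
    'asr_zh': '请仔细听这段音频片段,并将其内容逐字记录。',
    'asr_en': 'Please listen to the audio snippet carefully and transcribe the content.',
    'speaker_analysis': "Based on the speaker's content, speculate on their gender, condition, age range, and health status.",
    'audio_caption': 'Summarize the main content of the audio.',
    'scene_tagging': "Utilize one keyword to convey the audio's content or the associated scene.",
}


def build_audio_task_msgs(task, audio_input):
    """Return a single-turn message list for the chosen audio task."""
    task_prompt = TASK_PROMPTS[task] + '\n'
    return [{'role': 'user', 'content': [task_prompt, audio_input]}]


fake_audio = [0.0] * 16  # stand-in for the array returned by librosa.load
msgs = build_audio_task_msgs('audio_caption', fake_audio)
print(msgs[0]['content'][0])  # Summarize the main content of the audio.
```

An unknown task name raises `KeyError`, which surfaces typos early instead of sending an empty prompt to the model.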

Multimodal Live Streaming

Click to view Python code running MiniCPM-o 2.6 with chat inference.

import math

import numpy as np

from PIL import Image

from moviepy.editor import VideoFileClip

import tempfile

import librosa

import soundfile as sf

import torch

from transformers import AutoModel, AutoTokenizer

def get_video_chunk_content(video_path, flatten=True):
    video = VideoFileClip(video_path)
    print('video_duration:', video.duration)

    with tempfile.NamedTemporaryFile(suffix=".wav", delete=True) as temp_audio_file:
        temp_audio_file_path = temp_audio_file.name
        video.audio.write_audiofile(temp_audio_file_path, codec="pcm_s16le", fps=16000)
        audio_np, sr = librosa.load(temp_audio_file_path, sr=16000, mono=True)
    num_units = math.ceil(video.duration)

    # 1 frame + 1s audio chunk
    contents = []
    for i in range(num_units):
        frame = video.get_frame(i+1)
        image = Image.fromarray((frame).astype(np.uint8))
        audio = audio_np[sr*i:sr*(i+1)]
        if flatten:
            contents.extend(["<unit>", image, audio])
        else:
            contents.append(["<unit>", image, audio])

    return contents

model = AutoModel.from_pretrained('openbmb/MiniCPM-o-2_6', trust_remote_code=True,

attn_implementation='sdpa', torch_dtype=torch.bfloat16)

model = model.eval().cuda()

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-o-2_6', trust_remote_code=True)

model.init_tts()

# If you are using an older version of PyTorch, you might encounter the error "weight_norm_fwd_first_dim_kernel" not implemented for 'BFloat16'. In that case, convert the TTS module to float32.

# model.tts.float()

# https://huggingface.co/openbmb/MiniCPM-o-2_6/blob/main/assets/Skiing.mp4

video_path="assets/Skiing.mp4"

sys_msg = model.get_sys_prompt(mode='omni', language='en')

# if use voice clone prompt, please set ref_audio

# ref_audio_path = '/path/to/ref_audio'

# ref_audio, _ = librosa.load(ref_audio_path, sr=16000, mono=True)

# sys_msg = model.get_sys_prompt(ref_audio=ref_audio, mode='omni', language='en')

contents = get_video_chunk_content(video_path)

msg = {"role":"user", "content": contents}

msgs = [sys_msg, msg]

# please set generate_audio=True and output_audio_path to save the tts result

generate_audio = True

output_audio_path = 'output.wav'

res = model.chat(

msgs=msgs,

tokenizer=tokenizer,

sampling=True,

temperature=0.5,

max_new_tokens=4096,

omni_input=True, # please set omni_input=True when omni inference

use_tts_template=True,

generate_audio=generate_audio,

output_audio_path=output_audio_path,

max_slice_nums=1,

use_image_id=False,

return_dict=True

)

print(res)
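The omni input is built from one-second units, each pairing a frame with the matching slice of audio. The slicing itself can be shown without a video file. The sketch below uses a hypothetical function `split_into_units` and a plain list of zeros as the audio signal; it mirrors the `audio_np[sr*i:sr*(i+1)]` indexing and the `i+1` frame timestamps used in `get_video_chunk_content`.

```python
import math


def split_into_units(audio, sr=16000):
    """Split a 1-D audio sequence into (frame_time, chunk) pairs of at most one second."""
    num_units = math.ceil(len(audio) / sr)
    units = []
    for i in range(num_units):
        frame_time = i + 1                      # timestamp (s) at which the frame is taken
        chunk = audio[sr * i: sr * (i + 1)]     # the i-th second of audio
        units.append((frame_time, chunk))
    return units


audio = [0.0] * 40000  # 2.5 seconds of silence at 16 kHz
units = split_into_units(audio)
print([(t, len(chunk)) for t, chunk in units])
# [(1, 16000), (2, 16000), (3, 8000)]
```

The last unit is shorter than one second whenever the duration is not a whole number of seconds, so downstream code should not assume a fixed chunk length.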

Click to view Python code running MiniCPM-o 2.6 with streaming inference.

Note: The streaming inference has a slight performance degradation because the audio encoding is not global.

# A new conversation needs to reset the session first; this also resets the KV cache

model.reset_session()

contents = get_video_chunk_content(video_path, flatten=False)

session_id = '123'

generate_audio = True

# 1. prefill system prompt

res = model.streaming_prefill(

session_id=session_id,

msgs=[sys_msg],

tokenizer=tokenizer

)

# 2. prefill video/audio chunks

for content in contents:
    msgs = [{"role":"user", "content": content}]
    res = model.streaming_prefill(
        session_id=session_id,
        msgs=msgs,
        tokenizer=tokenizer
    )

# 3. generate

res = model.streaming_generate(

session_id=session_id,

tokenizer=tokenizer,

temperature=0.5,

generate_audio=generate_audio

)

audios = []

text = ""

if generate_audio:
    for r in res:
        audio_wav = r.audio_wav
        sampling_rate = r.sampling_rate
        txt = r.text
        audios.append(audio_wav)
        text += txt

    res = np.concatenate(audios)
    sf.write("output.wav", res, samplerate=sampling_rate)
    print("text:", text)
    print("audio saved to output.wav")
else:
    for r in res:
        text += r['text']
    print("text:", text)
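The generation step returns an iterable of partial results that must be accumulated by the caller. The pattern can be tried without loading the model by substituting a generator. In the sketch below, `FakeChunk` and `fake_stream` are hypothetical stand-ins, not the real return type of `streaming_generate`, and the sampling rate of 24000 is an arbitrary placeholder value.

```python
from dataclasses import dataclass


@dataclass
class FakeChunk:
    """Hypothetical stand-in for one streamed result; not the real return type."""
    text: str
    audio_wav: list
    sampling_rate: int


def fake_stream():
    """Yield two partial results, the way a streaming generator would."""
    yield FakeChunk('Hello', [0.1, 0.2], 24000)
    yield FakeChunk(', world', [0.3], 24000)


def collect_stream(stream):
    """Accumulate text and audio samples from an iterable of chunks."""
    text, audio, sampling_rate = '', [], None
    for r in stream:
        text += r.text
        audio.extend(r.audio_wav)
        sampling_rate = r.sampling_rate
    return text, audio, sampling_rate


text, audio, sampling_rate = collect_stream(fake_stream())
print(text)        # Hello, world
print(len(audio))  # 3
```

Since each chunk is available as soon as it is yielded, a real application could play or forward the audio inside the loop rather than waiting for the full response.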

Inference on Multiple GPUs

You can run MiniCPM-Llama3-V 2.5 on multiple low-VRAM GPUs (12 GB or 16 GB) by distributing the model's layers across them. Please refer to this tutorial for detailed instructions on how to load the model and run inference using multiple low-VRAM GPUs.
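Distributing layers across GPUs comes down to a mapping from module names to device indices. The sketch below shows only that mapping step and is not the procedure from the tutorial. The helper `split_layers` and the module prefix `llm.model.layers` are assumptions; the real module names should be read from the loaded model. A complete device map would also need entries for the remaining modules, such as the embeddings and the vision encoder.

```python
def split_layers(num_layers, num_gpus, prefix='llm.model.layers'):
    """Assign contiguous blocks of layers to GPUs as evenly as possible.

    The prefix is a hypothetical module path used for illustration.
    """
    per_gpu = -(-num_layers // num_gpus)  # ceiling division
    return {f'{prefix}.{i}': i // per_gpu for i in range(num_layers)}


device_map = split_layers(num_layers=32, num_gpus=2)
print(device_map['llm.model.layers.0'])   # 0
print(device_map['llm.model.layers.15'])  # 0
print(device_map['llm.model.layers.16'])  # 1
print(device_map['llm.model.layers.31'])  # 1
```

Keeping each GPU's layers contiguous means activations cross a device boundary only once per GPU during a forward pass.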

Inference on Mac

Click to view an example, to run MiniCPM-Llama3-V 2.5 on 💻 Mac with MPS (Apple silicon or AMD GPUs).

# test.py Need more than 16GB memory.

import torch

from PIL import Image

from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained('openbmb/MiniCPM-Llama3-V-2_5', trust_remote_code=True, low_cpu_mem_usage=True)

model = model.to(device='mps')

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-Llama3-V-2_5', trust_remote_code=True)

model.eval()

image = Image.open('./assets/hk_OCR.jpg').convert('RGB')

question = 'Where is this photo taken?'

msgs = [{'role': 'user', 'content': question}]

answer, context, _ = model.chat(

image=image,

msgs=msgs,

context=None,

tokenizer=tokenizer,

sampling=True

)

print(answer)

Run with command:

PYTORCH_ENABLE_MPS_FALLBACK=1 python test.py

Efficient Inference with llama.cpp, Ollama, vLLM

See our fork of llama.cpp for more details. This implementation supports smooth inference at 16-18 tokens/s on iPad (test environment: iPad Pro + M4).

See our fork of Ollama for more details. This implementation supports smooth inference at 16-18 tokens/s on iPad (test environment: iPad Pro + M4).

vLLM now officially supports MiniCPM-V 2.6, MiniCPM-Llama3-V 2.5 and MiniCPM-V 2.0. For now, you can use our fork to run MiniCPM-o 2.6.

- Install vLLM (>=0.7.1):

pip install vllm

- Run Example:

Fine-tuning

Simple Fine-tuning

We support simple fine-tuning with Hugging Face for MiniCPM-o 2.6, MiniCPM-V 2.6, MiniCPM-Llama3-V 2.5 and MiniCPM-V 2.0.

With Align-Anything

Fine-tuning MiniCPM-o 2.6 (both vision and audio, SFT and DPO) is supported by the PKU-Alignment Team through the Align-Anything framework. Align-Anything is a scalable framework that aims to align any-modality large models with human intentions, open-sourcing the datasets, models and benchmarks. Benefiting from its concise and modular design, it supports 30+ open-source benchmarks, 40+ models and algorithms including SFT, SimPO, RLHF, etc. It also provides 30+ directly runnable scripts, making it suitable for beginners to quickly get started.

Best Practices: MiniCPM-o 2.6.

With LLaMA-Factory

We support fine-tuning MiniCPM-o 2.6 and MiniCPM-V 2.6 with the LLaMA-Factory framework. LLaMA-Factory provides a solution for flexibly customizing the fine-tuning (Lora/Full/Qlora) of 200+ LLMs without the need for coding through the built-in web UI LLaMABoard. It supports various training methods like sft/ppo/dpo/kto and advanced algorithms like Galore/BAdam/LLaMA-Pro/Pissa/LongLoRA.

Best Practices: MiniCPM-o 2.6 | MiniCPM-V 2.6.

With the SWIFT Framework

We now support MiniCPM-V series fine-tuning with the SWIFT framework. SWIFT supports training, inference, evaluation and deployment of nearly 200 LLMs and MLLMs. It supports the lightweight training solutions provided by PEFT and a complete Adapters Library including techniques such as NEFTune, LoRA+ and LLaMA-PRO.

Best Practices: MiniCPM-V 1.0, MiniCPM-V 2.0, MiniCPM-V 2.6.

Awesome work using MiniCPM-V & MiniCPM-o

- text-extract-api: Document extraction API using OCRs and Ollama supported models

- comfyui_LLM_party: Build LLM workflows and integrate into existing image workflows

- Ollama-OCR: OCR package uses vlms through Ollama to extract text from images and PDF

- comfyui-mixlab-nodes: ComfyUI node suite supports Workflow-to-APP, GPT&3D and more

- OpenAvatarChat: Interactive digital human conversation implementation on single PC

- pensieve: A privacy-focused passive recording project by recording screen content

- paperless-gpt: Use LLMs to handle paperless-ngx, AI-powered titles, tags and OCR

- Neuro: A recreation of Neuro-Sama, but running on local models on consumer hardware

FAQs

Click here to view the FAQs

Limitations

As an experimental trial, we find MiniCPM-o 2.6 has notable limitations worth further investigation and improvement.

- Unstable speech output. The speech generation can be flawed, with noisy backgrounds and meaningless sounds.

- Repeated response. The model tends to repeat its response when encountering similar consecutive user queries.

- High latency on Web Demo. Users may experience unusually high latency when using the web demo hosted on overseas servers. We recommend deploying the demo locally or using a good network connection.

Model License

- This repository is released under the Apache-2.0 License.

- The usage of MiniCPM-o/V model weights must strictly follow MiniCPM Model License.md.

- The models and weights of MiniCPM are completely free for academic research. After filling out a "questionnaire" for registration, they are also available for free commercial use.

Statement

As MLLMs, MiniCPM-o/V models generate content by learning from a large amount of multimodal corpora, but they cannot comprehend, express personal opinions, or make value judgements. Anything generated by MiniCPM-o/V models does not represent the views and positions of the model developers.

We will not be liable for any problems arising from the use of MiniCPM-o/V models, including but not limited to data security issues, risk of public opinion, or any risks and problems arising from the misdirection, misuse, or dissemination of the model.

Institutions

This project is developed by the following institutions:

Key Techniques and Other Multimodal Projects

👏 Welcome to explore key techniques of MiniCPM-o/V and other multimodal projects of our team:

VisCPM | RLPR | RLHF-V | LLaVA-UHD | RLAIF-V

Citation

If you find our model/code/paper helpful, please consider citing our papers 📝 and starring us ⭐️!

@article{yao2024minicpm,
  title={MiniCPM-V: A GPT-4V Level MLLM on Your Phone},
  author={Yao, Yuan and Yu, Tianyu and Zhang, Ao and Wang, Chongyi and Cui, Junbo and Zhu, Hongji and Cai, Tianchi and Li, Haoyu and Zhao, Weilin and He, Zhihui and others},
  journal={arXiv preprint arXiv:2408.01800},
  year={2024}
}
