About DeepResearch

Introduction

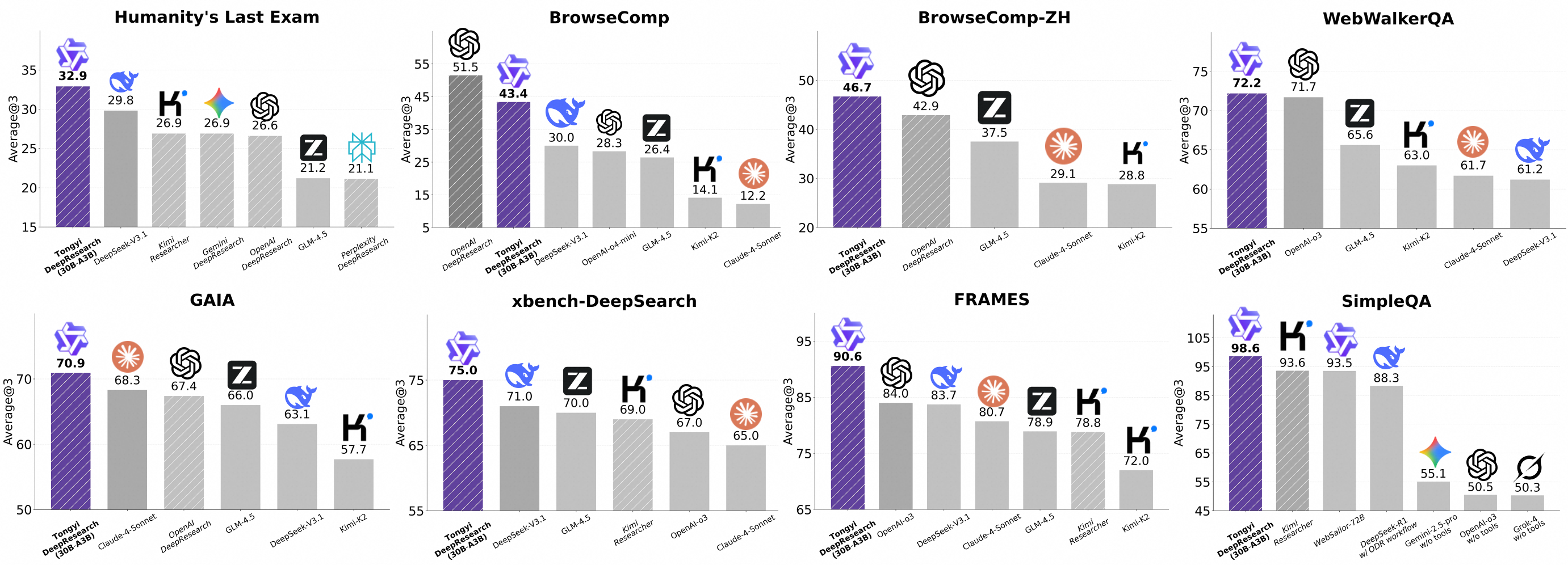

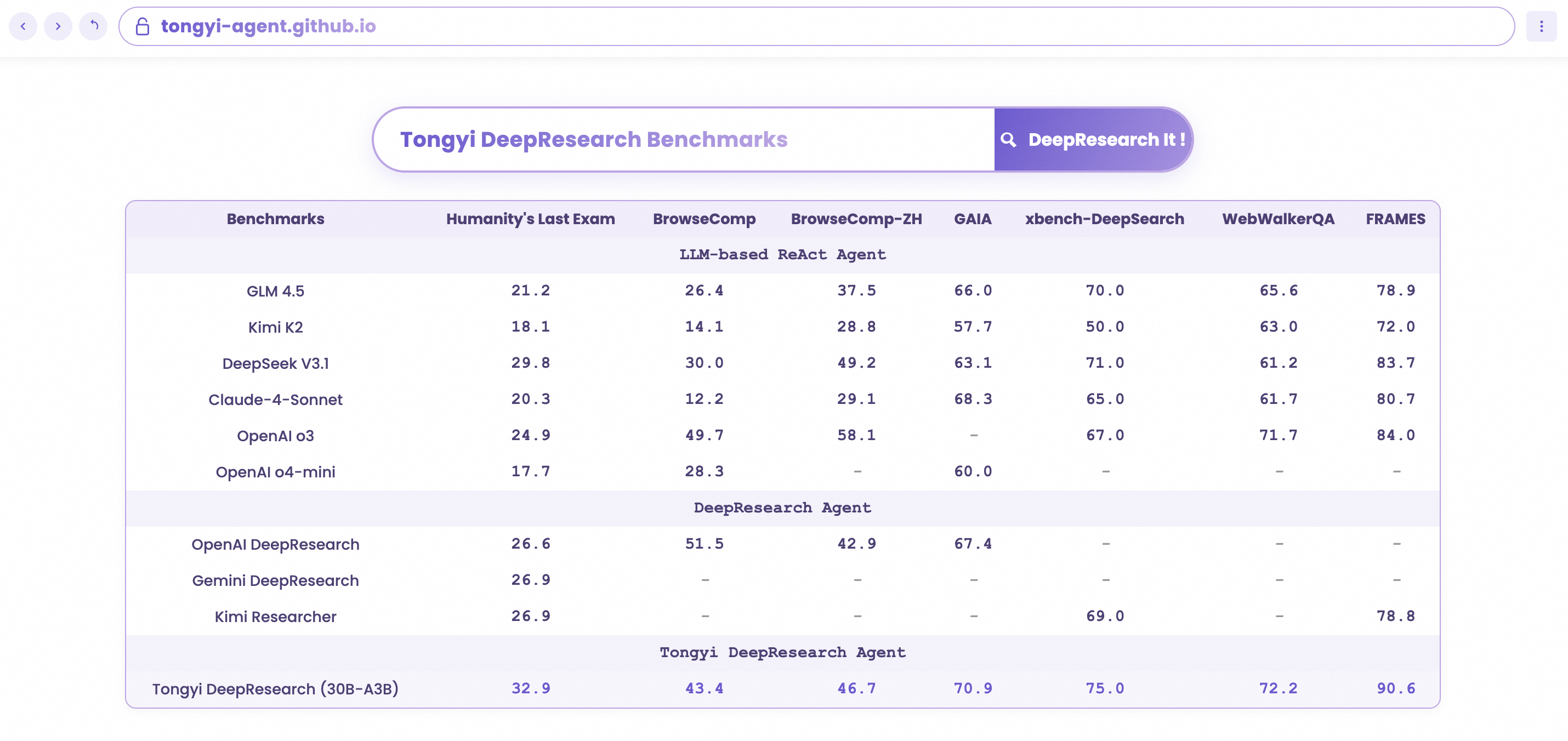

We present Tongyi DeepResearch, an agentic large language model with 30.5 billion total parameters, of which only 3.3 billion are activated per token. Developed by Tongyi Lab, the model is designed for long-horizon, deep information-seeking tasks. Tongyi DeepResearch demonstrates state-of-the-art performance across a range of agentic search benchmarks, including Humanity's Last Exam, BrowseComp, BrowseComp-ZH, WebWalkerQA, xbench-DeepSearch, FRAMES and SimpleQA.


Tongyi DeepResearch builds upon our previous work on the WebAgent project.

More details can be found in our 📰 Tech Blog.

Features

- ⚙️ Fully automated synthetic data generation pipeline: We design a highly scalable data synthesis pipeline, which is fully automatic and empowers agentic pre-training, supervised fine-tuning, and reinforcement learning.

- 🔄 Large-scale continual pre-training on agentic data: Leveraging diverse, high-quality agentic interaction data to extend model capabilities, maintain freshness, and strengthen reasoning performance.

- 🔁 End-to-end reinforcement learning: We employ a strictly on-policy RL approach based on a customized Group Relative Policy Optimization framework, with token-level policy gradients, leave-one-out advantage estimation, and selective filtering of negative samples to stabilize training in a non‑stationary environment.

- 🤖 Agent Inference Paradigm Compatibility: At inference, Tongyi DeepResearch is compatible with two inference paradigms: ReAct, for rigorously evaluating the model's core intrinsic abilities, and an IterResearch-based 'Heavy' mode, which uses a test-time scaling strategy to unlock the model's maximum performance ceiling.
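The leave-one-out advantage estimation mentioned in the reinforcement learning bullet can be illustrated with a minimal sketch. It assumes a group of rollouts sampled for the same question, each with a scalar reward. The function name and the example rewards are hypothetical, and the project's actual training code may differ (for example in normalization or in how negative samples are filtered).

```python
from typing import List


def leave_one_out_advantages(rewards: List[float]) -> List[float]:
    """Return each reward minus the mean reward of the other rollouts.

    Illustrative only: a generic leave-one-out baseline, not the
    project's training implementation.
    """
    n = len(rewards)
    if n < 2:
        raise ValueError("need at least two rollouts per group")
    total = sum(rewards)
    # The baseline for rollout i excludes its own reward.
    return [r - (total - r) / (n - 1) for r in rewards]


if __name__ == "__main__":
    # Hypothetical rewards for four rollouts of one question.
    print(leave_one_out_advantages([1.0, 0.0, 0.0, 1.0]))
```

Because each baseline leaves out the rollout's own reward, it does not depend on the sample it is applied to, and the advantages within a group sum to zero.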

Model Download

You can directly download the model by following the links below.

| Model | Download Links | Model Size | Context Length |
|---|---|---|---|
| Tongyi-DeepResearch-30B-A3B | 🤗 HuggingFace 🤖 ModelScope | 30B-A3B | 128K |

News

[2025/09/17] 🔥 We have released Tongyi-DeepResearch-30B-A3B.

Deep Research Benchmark Results

Quick Start

This guide provides instructions for setting up the environment and running inference scripts located in the inference folder.

1. Environment Setup

- Recommended Python version: 3.10.0 (using other versions may cause dependency issues).

- It is strongly advised to create an isolated environment using `conda` or `virtualenv`.

    # Example with Conda
    conda create -n react_infer_env python=3.10.0
    conda activate react_infer_env
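To confirm that the activated environment uses the recommended interpreter, a small check like the following can be run inside it. The helper name `is_recommended_python` is hypothetical and not part of the repository, and the check compares only the major and minor version.

```python
import sys
from typing import Tuple

RECOMMENDED = (3, 10)  # major, minor from the recommendation above


def is_recommended_python(version: Tuple[int, ...] = tuple(sys.version_info[:2])) -> bool:
    """True when the major.minor version matches the recommended series."""
    return tuple(version[:2]) == RECOMMENDED


if __name__ == "__main__":
    current = ".".join(map(str, sys.version_info[:3]))
    if is_recommended_python():
        print(f"Python {current}: matches the recommended 3.10 series")
    else:
        print(f"Python {current}: differs from the recommended 3.10.0")
```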

2. Installation

Install the required dependencies:

pip install -r requirements.txt

3. Prepare Evaluation Data

- Create a folder named `eval_data/` in the project root.
- Place your QA file in JSONL format inside this directory, e.g. `eval_data/example.jsonl`.
- Each line must be a JSON object that includes both of the following keys: `{"question": "...", "answer": "..."}`
- A sample file is provided in the `eval_data` folder for reference.
- If you plan to use the file parser tool, prepend the file name to the `question` field and place the referenced file inside the `eval_data/file_corpus/` directory.
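As a rough illustration of the format described above, the following sketch writes a small JSONL file and reports any line that is not a JSON object with both required keys. The file name `eval_data/my_questions.jsonl`, the sample question, and the helper names are hypothetical and not part of the repository.

```python
import json
from pathlib import Path
from typing import Dict, Iterable, List

REQUIRED_KEYS = {"question", "answer"}


def write_jsonl(path: Path, records: Iterable[Dict[str, str]]) -> None:
    """Write one JSON object per line, creating parent folders if needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def find_invalid_lines(path: Path) -> List[int]:
    """Return 1-based numbers of lines that are not objects with both keys."""
    bad = []
    with path.open(encoding="utf-8") as f:
        for lineno, line in enumerate(f, start=1):
            if not line.strip():
                continue  # ignore blank lines
            try:
                obj = json.loads(line)
            except json.JSONDecodeError:
                bad.append(lineno)
                continue
            if not isinstance(obj, dict) or not REQUIRED_KEYS.issubset(obj):
                bad.append(lineno)
    return bad


if __name__ == "__main__":
    target = Path("eval_data") / "my_questions.jsonl"  # hypothetical file name
    write_jsonl(target, [{"question": "What is the capital of France?", "answer": "Paris"}])
    print(find_invalid_lines(target))
```

Running a check like this before inference can surface malformed lines early, rather than partway through a long evaluation run.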

4. Configure the Inference Script

- Open `run_react_infer.sh` and modify the following variables as instructed in the comments:
  - `MODEL_PATH` - path to the local or remote model weights.
  - `DATASET` - path to the evaluation set, e.g. `example`.
  - `OUTPUT_PATH` - path for saving the prediction results, e.g. `./outputs`.
- Depending on the tools you enable (retrieval, calculator, web search, etc.), provide the required `API_KEY`, `BASE_URL`, or other credentials. Each key is explained inline in the bash script.
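Before launching, it can help to confirm that none of the settings were left empty. The sketch below assumes the values are exported as environment variables, which may not match how `run_react_infer.sh` actually reads them. The helper name `missing_settings` is hypothetical, and the list of names is taken only from the text above.

```python
import os
from typing import Iterable, List, Mapping, Optional


def missing_settings(names: Iterable[str], env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return the names that are unset or blank in the given environment."""
    env = os.environ if env is None else env
    return [name for name in names if not env.get(name, "").strip()]


if __name__ == "__main__":
    # Whether the script reads these from the environment is an
    # assumption of this sketch; adjust to your own setup.
    required = ["MODEL_PATH", "DATASET", "OUTPUT_PATH", "API_KEY", "BASE_URL"]
    missing = missing_settings(required)
    if missing:
        print("Not set:", ", ".join(missing))
    else:
        print("All listed settings are present")
```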

5. Run the Inference Script

bash run_react_infer.sh

With these steps, you can fully prepare the environment, configure the dataset, and run the model. For more details, consult the inline comments in each script or open an issue.

Benchmark Evaluation

We provide benchmark evaluation scripts for various datasets. Please refer to the evaluation scripts directory for more details.

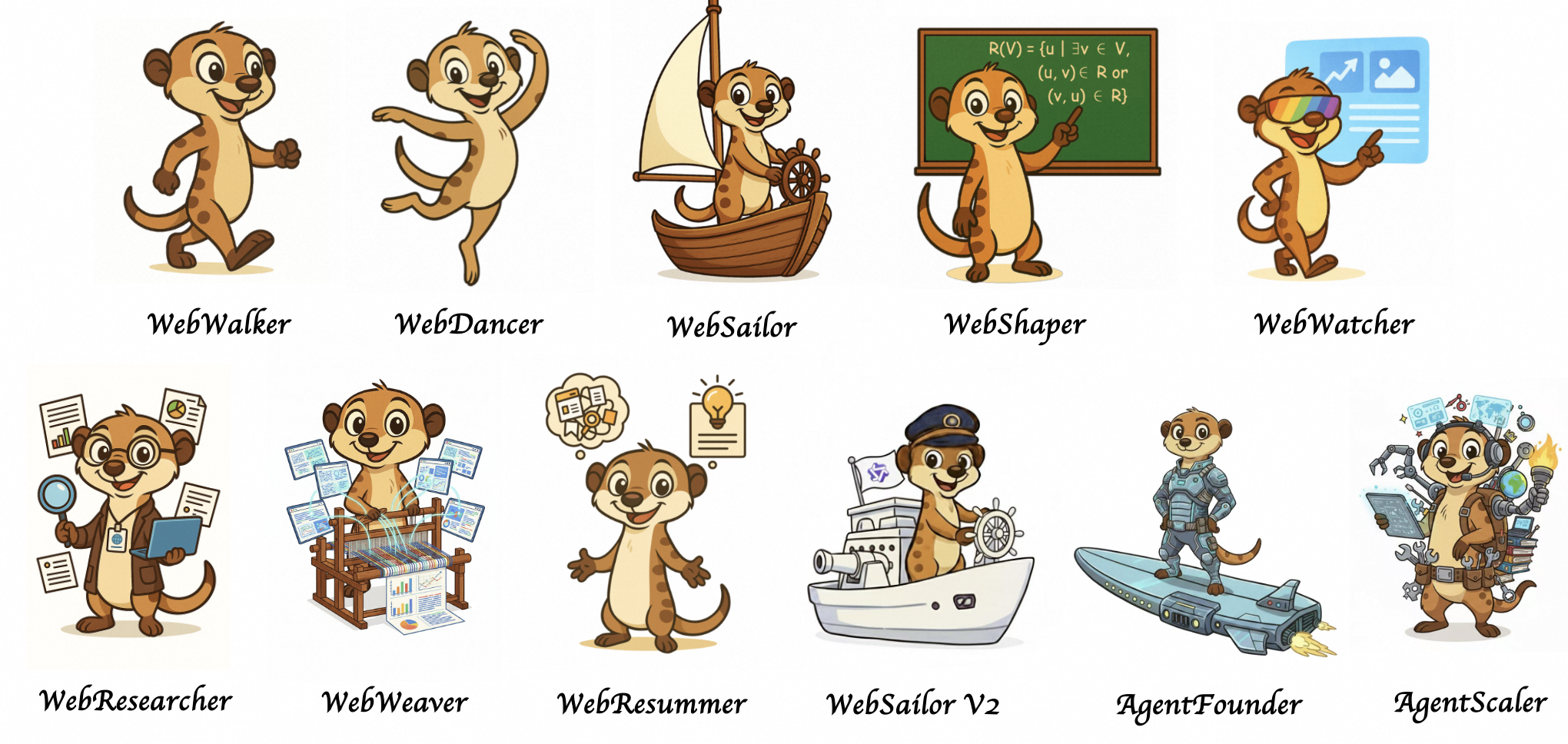

Deep Research Agent Family

Tongyi DeepResearch also has an extensive deep research agent family. You can find more information in the following papers:

[1] WebWalker: Benchmarking LLMs in Web Traversal

[2] WebDancer: Towards Autonomous Information Seeking Agency

[3] WebSailor: Navigating Super-human Reasoning for Web Agent

[4] WebShaper: Agentically Data Synthesizing via Information-Seeking Formalization

[5] WebWatcher: Breaking New Frontier of Vision-Language Deep Research Agent

[6] WebResearcher: Unleashing unbounded reasoning capability in Long-Horizon Agents

[7] ReSum: Unlocking Long-Horizon Search Intelligence via Context Summarization

[8] WebWeaver: Structuring Web-Scale Evidence with Dynamic Outlines for Open-Ended Deep Research

[9] WebSailor-V2: Bridging the Chasm to Proprietary Agents via Synthetic Data and Scalable Reinforcement Learning

[10] Scaling Agents via Continual Pre-training

[11] Towards General Agentic Intelligence via Environment Scaling

🌟 Misc

🚩 Talent Recruitment

🔥🔥🔥 We are hiring! Research intern positions are open (based in Hangzhou, Beijing, Shanghai).

📚 Research Area: Web Agent, Search Agent, Agent RL, MultiAgent RL, Agentic RAG

☎️ Contact: [email protected]

Contact Information

For communications, please contact Yong Jiang ([email protected]).

Citation

    @misc{tongyidr,
      author={Tongyi DeepResearch Team},
      title={Tongyi-DeepResearch},
      year={2025},
      howpublished={\url{https://github.com/Alibaba-NLP/DeepResearch}}
    }
